mirror of https://github.com/apache/druid.git

update docs


parent

f1d705cb73

commit

771f89f495

|

|

@ -0,0 +1,142 @@

|

|||

---

|

||||

layout: doc_page

|

||||

---

|

||||

# Select Queries

|

||||

Select queries return raw Druid rows and support pagination.

```json
{
  "queryType": "select",
  "dataSource": "wikipedia",
  "dimensions": [],
  "metrics": [],
  "granularity": "all",
  "intervals": [
    "2013-01-01/2013-01-02"
  ],
  "pagingSpec": {"pagingIdentifiers": {}, "threshold": 5}
}
```

There are several main parts to a select query:

|property|description|required?|
|--------|-----------|---------|
|queryType|This String should always be "select"; this is the first thing Druid looks at to figure out how to interpret the query|yes|
|dataSource|A String defining the data source to query, very similar to a table in a relational database|yes|
|intervals|A JSON Object representing ISO-8601 Intervals. This defines the time ranges to run the query over.|yes|
|dimensions|The list of dimensions to select. If left empty, all dimensions are returned.|no|
|metrics|The list of metrics to select. If left empty, all metrics are returned.|no|
|pagingSpec|A JSON object indicating offsets into different scanned segments. Select query results return `pagingIdentifiers` that can be reused in the next query's pagingSpec for pagination.|yes|
|context|An additional JSON Object which can be used to specify certain flags.|no|
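
As a rough illustration of how these properties fit together, the sketch below assembles the same query body in Python. The helper name `build_select_query` and its parameters are hypothetical and not part of Druid; only the JSON keys come from the example above.

```python
import json


def build_select_query(data_source, intervals, threshold,
                       dimensions=None, metrics=None, paging_identifiers=None):
    """Assemble a select query body as a plain dict (hypothetical helper)."""
    return {
        "queryType": "select",  # always "select" for this query type
        "dataSource": data_source,
        # Empty lists mean "return all dimensions / all metrics".
        "dimensions": list(dimensions or []),
        "metrics": list(metrics or []),
        "granularity": "all",
        "intervals": list(intervals),
        "pagingSpec": {
            # An empty map starts from the beginning of each segment.
            "pagingIdentifiers": dict(paging_identifiers or {}),
            "threshold": threshold,
        },
    }


query = build_select_query("wikipedia", ["2013-01-01/2013-01-02"], threshold=5)
print(json.dumps(query, indent=2))
```

The printed JSON could then be saved to a file and posted to a Druid node in the same way as any other query body.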

The format of the result is:

```json
[{
  "timestamp" : "2013-01-01T00:00:00.000Z",
  "result" : {
    "pagingIdentifiers" : {
      "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9" : 4
    },
    "events" : [ {
      "segmentId" : "wikipedia_editstream_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",
      "offset" : 0,
      "event" : {
        "timestamp" : "2013-01-01T00:00:00.000Z",
        "robot" : "1",
        "namespace" : "article",
        "anonymous" : "0",
        "unpatrolled" : "0",
        "page" : "11._korpus_(NOVJ)",
        "language" : "sl",
        "newpage" : "0",
        "user" : "EmausBot",
        "count" : 1.0,
        "added" : 39.0,
        "delta" : 39.0,
        "variation" : 39.0,
        "deleted" : 0.0
      }
    }, {
      "segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",
      "offset" : 1,
      "event" : {
        "timestamp" : "2013-01-01T00:00:00.000Z",
        "robot" : "0",
        "namespace" : "article",
        "anonymous" : "0",
        "unpatrolled" : "0",
        "page" : "112_U.S._580",
        "language" : "en",
        "newpage" : "1",
        "user" : "MZMcBride",
        "count" : 1.0,
        "added" : 70.0,
        "delta" : 70.0,
        "variation" : 70.0,
        "deleted" : 0.0
      }
    }, {
      "segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",
      "offset" : 2,
      "event" : {
        "timestamp" : "2013-01-01T00:00:00.000Z",
        "robot" : "0",
        "namespace" : "article",
        "anonymous" : "0",
        "unpatrolled" : "0",
        "page" : "113_U.S._243",
        "language" : "en",
        "newpage" : "1",
        "user" : "MZMcBride",
        "count" : 1.0,
        "added" : 77.0,
        "delta" : 77.0,
        "variation" : 77.0,
        "deleted" : 0.0
      }
    }, {
      "segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",
      "offset" : 3,
      "event" : {
        "timestamp" : "2013-01-01T00:00:00.000Z",
        "robot" : "0",
        "namespace" : "article",
        "anonymous" : "0",
        "unpatrolled" : "0",
        "page" : "113_U.S._73",
        "language" : "en",
        "newpage" : "1",
        "user" : "MZMcBride",
        "count" : 1.0,
        "added" : 70.0,
        "delta" : 70.0,
        "variation" : 70.0,
        "deleted" : 0.0
      }
    }, {
      "segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",
      "offset" : 4,
      "event" : {
        "timestamp" : "2013-01-01T00:00:00.000Z",
        "robot" : "0",
        "namespace" : "article",
        "anonymous" : "0",
        "unpatrolled" : "0",
        "page" : "113_U.S._756",
        "language" : "en",
        "newpage" : "1",
        "user" : "MZMcBride",
        "count" : 1.0,
        "added" : 68.0,
        "delta" : 68.0,
        "variation" : 68.0,
        "deleted" : 0.0
      }
    } ]
  }
} ]
```

The result includes a global `pagingIdentifiers` map that can be reused in the `pagingSpec` of the next select query. The client must increase each offset by 1 before sending it back.
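
The client-side offset bump can be sketched in a few lines of Python. This is a minimal illustration that assumes the result shape shown above; the function name `next_paging_spec` is hypothetical and not part of any Druid client.

```python
def next_paging_spec(result_entry, threshold):
    """Build the pagingSpec for the next page from one select result entry."""
    identifiers = result_entry["result"]["pagingIdentifiers"]
    return {
        # Each returned offset is the last row already seen in that segment,
        # so the next page starts one row further on.
        "pagingIdentifiers": {seg: off + 1 for seg, off in identifiers.items()},
        "threshold": threshold,
    }


segment = ("wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_"
           "2013-01-10T08:13:47.830Z_v9")
page = {
    "timestamp": "2013-01-01T00:00:00.000Z",
    "result": {"pagingIdentifiers": {segment: 4}, "events": []},
}
spec = next_paging_spec(page, threshold=5)
print(spec["pagingIdentifiers"][segment])  # prints 5
```

The returned dict would replace the `pagingSpec` of the previous query, leaving the rest of the query body unchanged.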

|

||||

|

|

@ -1,77 +1,91 @@

|

|||

---

|

||||

layout: doc_page

|

||||

---

|

||||

Greetings! We see you've taken an interest in Druid. That's awesome! Hopefully this tutorial will help clarify some core Druid concepts. We will go through one of the Real-time "Examples":Examples.html, and issue some basic Druid queries. The data source we'll be working with is the "Twitter spritzer stream":https://dev.twitter.com/docs/streaming-apis/streams/public. If you are ready to explore Druid, brave its challenges, and maybe learn a thing or two, read on!

|

||||

Greetings! We see you've taken an interest in Druid. That's awesome! Hopefully this tutorial will help clarify some core Druid concepts. We will go through one of the Real-time [Examples](Examples.html), and issue some basic Druid queries. The data source we'll be working with is the [Twitter spritzer stream](https://dev.twitter.com/docs/streaming-apis/streams/public). If you are ready to explore Druid, brave its challenges, and maybe learn a thing or two, read on!

|

||||

|

||||

h2. Setting Up

|

||||

# Setting Up

|

||||

|

||||

There are two ways to set up Druid: download a tarball, or build it from source.

|

||||

|

||||

h3. Download a Tarball

|

||||

# Download a Tarball

|

||||

|

||||

We've built a tarball that contains everything you'll need. You'll find it "here":http://static.druid.io/artifacts/releases/druid-services-0.6.98-bin.tar.gz.

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.98-bin.tar.gz).

|

||||

Download this bad boy to a directory of your choosing.

|

||||

|

||||

You can extract the awesomeness within by issuing:

|

||||

|

||||

pre. tar -zxvf druid-services-0.X.X.tar.gz

|

||||

```

|

||||

tar -zxvf druid-services-0.X.X.tar.gz

|

||||

```

|

||||

|

||||

Not too lost so far, right? That's great! If you cd into the directory:

|

||||

|

||||

pre. cd druid-services-0.X.X

|

||||

```

|

||||

cd druid-services-0.X.X

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

* run_example_server.sh

|

||||

* run_example_client.sh

|

||||

* LICENSE, config, examples, lib directories

|

||||

- run_example_server.sh

|

||||

- run_example_client.sh

|

||||

- LICENSE, config, examples, lib directories

|

||||

|

||||

h3. Clone and Build from Source

|

||||

# Clone and Build from Source

|

||||

|

||||

The other way to set up Druid is from source via git. To do so, run these commands:

|

||||

|

||||

<pre><code>git clone git@github.com:metamx/druid.git

|

||||

```

|

||||

git clone git@github.com:metamx/druid.git

|

||||

cd druid

|

||||

git checkout druid-0.X.X

|

||||

./build.sh

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

|

||||

<pre><code>DruidCorporateCLA.pdf README common examples indexer pom.xml server

|

||||

```

|

||||

DruidCorporateCLA.pdf README common examples indexer pom.xml server

|

||||

DruidIndividualCLA.pdf build.sh doc group_by.body install publications services

|

||||

LICENSE client eclipse_formatting.xml index-common merger realtime

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You can find the example executables in the examples/bin directory:

|

||||

* run_example_server.sh

|

||||

* run_example_client.sh

|

||||

|

||||

h2. Running Example Scripts

|

||||

# Running Example Scripts

|

||||

|

||||

Let's start doing stuff. You can start a Druid "Realtime":Realtime.html node by issuing:

|

||||

<code>./run_example_server.sh</code>

|

||||

Let's start doing stuff. You can start a Druid [Realtime](Realtime.html) node by issuing:

|

||||

|

||||

```

|

||||

./run_example_server.sh

|

||||

```

|

||||

|

||||

Select "twitter".

|

||||

|

||||

You'll need to register a new application with the twitter API, which only takes a minute. Go to "https://twitter.com/oauth_clients/new":https://twitter.com/oauth_clients/new and fill out the form and submit. Don't worry, the home page and callback url can be anything. This will generate keys for the Twitter example application. Take note of the values for consumer key/secret and access token/secret.

|

||||

You'll need to register a new application with the Twitter API, which only takes a minute. Go to [this link](https://twitter.com/oauth_clients/new), fill out the form, and submit it. Don't worry, the home page and callback URL can be anything. This will generate keys for the Twitter example application. Take note of the values for consumer key/secret and access token/secret.

|

||||

|

||||

Enter your credentials when prompted.

|

||||

|

||||

Once the node starts up you will see a bunch of logs about setting up properties and connecting to the data source. If everything was successful, you should see messages of the form shown below. If you see crazy exceptions, you probably typed in your login information incorrectly.

|

||||

<pre><code>2013-05-17 23:04:40,934 INFO [main] org.mortbay.log - Started SelectChannelConnector@0.0.0.0:8080

|

||||

|

||||

```

|

||||

2013-05-17 23:04:40,934 INFO [main] org.mortbay.log - Started SelectChannelConnector@0.0.0.0:8080

|

||||

2013-05-17 23:04:40,935 INFO [main] com.metamx.common.lifecycle.Lifecycle$AnnotationBasedHandler - Invoking start method[public void com.metamx.druid.http.FileRequestLogger.start()] on object[com.metamx.druid.http.FileRequestLogger@42bb0406].

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] twitter4j.TwitterStreamImpl - Connection established.

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - Connected_to_Twitter

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] twitter4j.TwitterStreamImpl - Receiving status stream.

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

Periodically, you'll also see messages of the form:

|

||||

<pre><code>2013-05-17 23:04:59,793 INFO [chief-twitterstream] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - nextRow() has returned 1,000 InputRows

|

||||

</code></pre>

|

||||

|

||||

```

|

||||

2013-05-17 23:04:59,793 INFO [chief-twitterstream] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - nextRow() has returned 1,000 InputRows

|

||||

```

|

||||

|

||||

These messages indicate you are ingesting events. The Druid real-time node ingests events into an in-memory buffer. Periodically, these events will be persisted to disk. Persisting to disk generates a whole bunch of logs:

|

||||

|

||||

<pre><code>2013-05-17 23:06:40,918 INFO [chief-twitterstream] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - Submitting persist runnable for dataSource[twitterstream]

|

||||

```

|

||||

2013-05-17 23:06:40,918 INFO [chief-twitterstream] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - Submitting persist runnable for dataSource[twitterstream]

|

||||

2013-05-17 23:06:40,920 INFO [twitterstream-incremental-persist] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - DataSource[twitterstream], Interval[2013-05-17T23:00:00.000Z/2013-05-18T00:00:00.000Z], persisting Hydrant[FireHydrant{index=com.metamx.druid.index.v1.IncrementalIndex@126212dd, queryable=com.metamx.druid.index.IncrementalIndexSegment@64c47498, count=0}]

|

||||

2013-05-17 23:06:40,937 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexMerger - Starting persist for interval[2013-05-17T23:00:00.000Z/2013-05-17T23:07:00.000Z], rows[4,666]

|

||||

2013-05-17 23:06:41,039 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexMerger - outDir[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0/v8-tmp] completed index.drd in 11 millis.

|

||||

|

|

@ -88,16 +102,20 @@ These messages indicate you are ingesting events. The Druid real time-node inges

|

|||

2013-05-17 23:06:41,425 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexIO$DefaultIndexIOHandler - Converting v8[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0/v8-tmp] to v9[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0]

|

||||

2013-05-17 23:06:41,426 INFO [twitterstream-incremental-persist]

|

||||

... ETC

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

The logs are about building different columns, probably not the most exciting stuff (they might as well be in Vulcan) if you are learning about Druid for the first time. Nevertheless, if you are interested in the details of our real-time architecture and why we persist indexes to disk, I suggest you read our [White Paper](http://static.druid.io/docs/druid.pdf).

|

||||

|

||||

Okay, things are about to get real (-time). To query the real-time node you've spun up, you can issue:

|

||||

<pre>./run_example_client.sh</pre>

|

||||

|

||||

Select "twitter" once again. This script issues ["GroupByQuery":GroupByQuery.html]s to the twitter data we've been ingesting. The query looks like this:

|

||||

```

|

||||

./run_example_client.sh

|

||||

```

|

||||

|

||||

<pre><code>{

|

||||

Select "twitter" once again. This script issues [GroupByQueries](GroupByQuery.html) to the twitter data we've been ingesting. The query looks like this:

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType": "groupBy",

|

||||

"dataSource": "twitterstream",

|

||||

"granularity": "all",

|

||||

|

|

@ -109,13 +127,14 @@ Select "twitter" once again. This script issues ["GroupByQuery":GroupByQuery.htm

|

|||

"filter": { "type": "selector", "dimension": "lang", "value": "en" },

|

||||

"intervals":["2012-10-01T00:00/2020-01-01T00"]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

This is a **groupBy** query, which you may be familiar with from SQL. We are grouping, or aggregating, via the **dimensions** field: ["lang", "utc_offset"]. We are **filtering** via the **"lang"** dimension, to only look at English tweets. Our **aggregations** are what we are calculating: a row count, and the sum of the tweets in our data.
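
To make the filter, grouping, and aggregation steps concrete, here is a small in-memory sketch in Python. It is only an analogy for what the query asks Druid to compute, not how Druid executes it; the sample rows and the helper `group_by` are made up for illustration.

```python
from collections import defaultdict


def group_by(rows, dimensions, value_filter, sum_field):
    """Filter rows, group them by the given dimensions, and aggregate each group."""
    groups = defaultdict(lambda: {"rows": 0, sum_field: 0.0})
    for row in rows:
        # Selector-style filter: keep only rows matching every dimension/value pair.
        if any(row.get(dim) != val for dim, val in value_filter.items()):
            continue
        key = tuple(row.get(dim) for dim in dimensions)
        groups[key]["rows"] += 1                  # row count
        groups[key][sum_field] += row[sum_field]  # sum of the metric
    return dict(groups)


# Hypothetical sample rows, not real Twitter data.
sample = [
    {"lang": "en", "utc_offset": "-18000", "tweets": 1.0},
    {"lang": "en", "utc_offset": "-18000", "tweets": 2.0},
    {"lang": "en", "utc_offset": "0", "tweets": 1.0},
    {"lang": "fr", "utc_offset": "3600", "tweets": 1.0},
]
result = group_by(sample, ["lang", "utc_offset"], {"lang": "en"}, "tweets")
for key, aggregates in sorted(result.items()):
    print(key, aggregates)
```

Each distinct combination of dimension values becomes one output group, which mirrors the one-event-per-group shape of a groupBy result.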

|

||||

|

||||

The result looks something like this:

|

||||

|

||||

<pre><code>[

|

||||

```json

|

||||

[

|

||||

{

|

||||

"version": "v1",

|

||||

"timestamp": "2012-10-01T00:00:00.000Z",

|

||||

|

|

@ -137,41 +156,48 @@ The result looks something like this:

|

|||

}

|

||||

},

|

||||

...

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

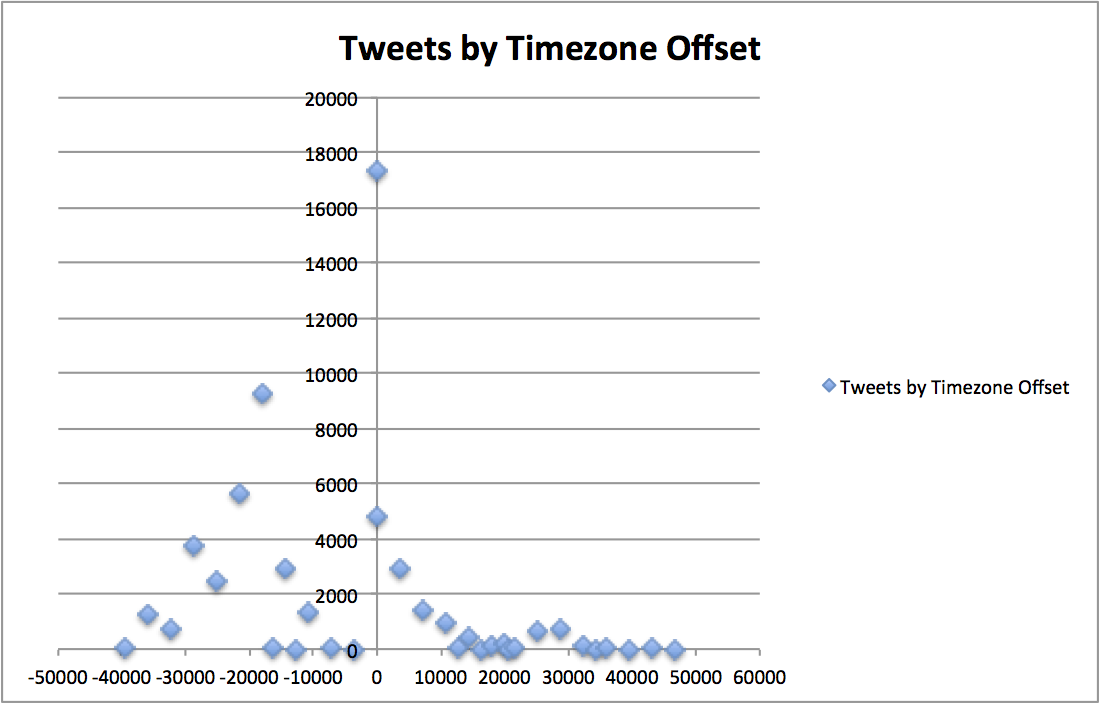

This data, plotted in a time series/distribution, looks something like this:

|

||||

|

||||

!http://metamarkets.com/wp-content/uploads/2013/06/tweets_timezone_offset.png(Timezone / Tweets Scatter Plot)!

|

||||

|

||||

|

||||

This groupBy query is a bit complicated and we'll return to it later. For the time being, just make sure you are getting some blocks of data back. If you are having problems, make sure you have "curl":http://curl.haxx.se/ installed. Control+C to break out of the client script.

|

||||

This groupBy query is a bit complicated and we'll return to it later. For the time being, just make sure you are getting some blocks of data back. If you are having problems, make sure you have [curl](http://curl.haxx.se/) installed. Control+C to break out of the client script.

|

||||

|

||||

h2. Querying Druid

|

||||

# Querying Druid

|

||||

|

||||

In your favorite editor, create the file:

|

||||

<pre>time_boundary_query.body</pre>

|

||||

|

||||

```

|

||||

time_boundary_query.body

|

||||

```

|

||||

|

||||

Druid queries are JSON blobs which are relatively painless to create programmatically, but an absolute pain to write by hand. So anyway, we are going to create a Druid query by hand. Add the following to the file you just created:

|

||||

<pre><code>{

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType" : "timeBoundary",

|

||||

"dataSource" : "twitterstream"

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

The [TimeBoundaryQuery](TimeBoundaryQuery.html) is one of the simplest Druid queries. To run the query, you can issue:

|

||||

<pre><code>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @time_boundary_query.body

|

||||

</code></pre>

|

||||

```
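
If curl is not available, the same POST can be sketched with Python's standard library. This is a hedged equivalent of the curl command, assuming the example node is listening on `localhost:8080`; the helper names `build_request` and `run_query` are illustrative only.

```python
import json
import urllib.request

# Same endpoint as the curl command in this tutorial.
DRUID_URL = "http://localhost:8080/druid/v2/?pretty"


def build_request(query, url=DRUID_URL):
    """Wrap a query dict in a POST request with a JSON content type."""
    body = json.dumps(query).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def run_query(query, url=DRUID_URL):
    """Send the query and decode the JSON response (requires a running node)."""
    with urllib.request.urlopen(build_request(query, url)) as response:
        return json.loads(response.read().decode("utf-8"))


request = build_request({"queryType": "timeBoundary", "dataSource": "twitterstream"})
print(request.get_method(), request.full_url)
```

Calling `run_query(...)` with the same dict would perform the actual request once the example server is up.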

|

||||

|

||||

We get something like this JSON back:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"minTime" : "2013-06-10T19:09:00.000Z",

|

||||

"maxTime" : "2013-06-10T20:50:00.000Z"

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

That's the result. What information do you think the result is conveying?

|

||||

...

|

||||

|

|

@ -179,11 +205,14 @@ If you said the result is indicating the maximum and minimum timestamps we've se

|

|||

|

||||

Return to your favorite editor and create the file:

|

||||

|

||||

<pre>timeseries_query.body</pre>

|

||||

```

|

||||

timeseries_query.body

|

||||

```

|

||||

|

||||

We are going to make a slightly more complicated query, the "TimeseriesQuery":TimeseriesQuery.html. Copy and paste the following into the file:

|

||||

We are going to make a slightly more complicated query, the [TimeseriesQuery](TimeseriesQuery.html). Copy and paste the following into the file:

|

||||

|

||||

<pre><code>{

|

||||

```json

|

||||

{

|

||||

"queryType":"timeseries",

|

||||

"dataSource":"twitterstream",

|

||||

"intervals":["2010-01-01/2020-01-01"],

|

||||

|

|

@ -193,22 +222,26 @@ We are going to make a slightly more complicated query, the "TimeseriesQuery":Ti

|

|||

{ "type": "doubleSum", "fieldName": "tweets", "name": "tweets"}

|

||||

]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You are probably wondering, what are these "Granularities":Granularities.html and "Aggregations":Aggregations.html things? What the query is doing is aggregating some metrics over some span of time.

|

||||

You are probably wondering, what are these [Granularities](Granularities.html) and [Aggregations](Aggregations.html) things? What the query is doing is aggregating some metrics over some span of time.

|

||||

To issue the query and get some results, run the following in your command line:

|

||||

<pre><code>curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @timeseries_query.body</code></pre>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @timeseries_query.body

|

||||

```

|

||||

|

||||

Once again, you should get a JSON blob of text back with your results, which looks something like this:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"tweets" : 358562.0,

|

||||

"rows" : 272271

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

If you issue the query again, you should notice your results updating.

|

||||

|

||||

|

|

@ -216,7 +249,8 @@ Right now all the results you are getting back are being aggregated into a singl

|

|||

|

||||

If you loudly exclaimed "we can change granularity to minute", you are absolutely correct again! We can specify different granularities to bucket our results, like so:

|

||||

|

||||

<pre><code>{

|

||||

```json

|

||||

{

|

||||

"queryType":"timeseries",

|

||||

"dataSource":"twitterstream",

|

||||

"intervals":["2010-01-01/2020-01-01"],

|

||||

|

|

@ -226,11 +260,12 @@ If you loudly exclaimed "we can change granularity to minute", you are absolutel

|

|||

{ "type": "doubleSum", "fieldName": "tweets", "name": "tweets"}

|

||||

]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

This gives us something like the following:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"tweets" : 2650.0,

|

||||

|

|

@ -250,16 +285,21 @@ This gives us something like the following:

|

|||

}

|

||||

},

|

||||

...

|

||||

</code></pre>

|

||||

```
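
The effect of switching from a single bucket to minute granularity can be illustrated with a small Python sketch. It mimics the bucketing on a handful of made-up events and assumes the timestamp format used in the results above; it is an analogy, not Druid's implementation.

```python
from collections import defaultdict
from datetime import datetime

TIMESTAMP_FORMAT = "%Y-%m-%dT%H:%M:%S.%fZ"


def bucket_by_minute(events):
    """Sum tweets and count rows per one-minute bucket."""
    buckets = defaultdict(lambda: {"tweets": 0.0, "rows": 0})
    for timestamp, tweets in events:
        parsed = datetime.strptime(timestamp, TIMESTAMP_FORMAT)
        # Truncate to the start of the minute to get the bucket key.
        key = parsed.strftime("%Y-%m-%dT%H:%M:00.000Z")
        buckets[key]["tweets"] += tweets
        buckets[key]["rows"] += 1
    return [{"timestamp": key, "result": value}
            for key, value in sorted(buckets.items())]


# Hypothetical events: (timestamp, tweets metric).
events = [
    ("2013-06-10T19:09:05.000Z", 1.0),
    ("2013-06-10T19:09:40.000Z", 2.0),
    ("2013-06-10T19:10:01.000Z", 1.0),
]
bucketed = bucket_by_minute(events)
for entry in bucketed:
    print(entry)
```

With granularity "all", every event would fall into one bucket; with "minute", each entry in the output covers a single minute.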

|

||||

|

||||

h2. Solving a Problem

|

||||

# Solving a Problem

|

||||

|

||||

One of Druid's main powers (see what we did there?) is to provide answers to problems, so let's pose a problem. What if we wanted to know what the top hash tags are, ordered by the number of tweets, where the language is English, over the last few minutes you've been reading this tutorial? To solve this problem, we have to return to the query we introduced at the very beginning of this tutorial, the [GroupByQuery](GroupByQuery.html). It would be nice if we could group results by dimension value and somehow sort those results... and it turns out we can!

|

||||

|

||||

Let's create the file:

|

||||

<pre>group_by_query.body</pre>

|

||||

|

||||

```

|

||||

group_by_query.body

|

||||

```

|

||||

and put the following in there:

|

||||

<pre><code>{

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType": "groupBy",

|

||||

"dataSource": "twitterstream",

|

||||

"granularity": "all",

|

||||

|

|

@ -271,16 +311,20 @@ and put the following in there:

|

|||

"filter": {"type": "selector", "dimension": "lang", "value": "en" },

|

||||

"intervals":["2012-10-01T00:00/2020-01-01T00"]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

Whoa! Our query just got way more complicated. Now we have these [Filters](Filters.html) things and this [OrderBy](OrderBy.html) thing. Fear not, it turns out the new objects we've introduced to our query can help define the format of our results and provide an answer to our question.

|

||||

|

||||

If you issue the query:

|

||||

<pre><code>curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @group_by_query.body</code></pre>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @group_by_query.body

|

||||

```

|

||||

|

||||

You should hopefully see an answer to our question. For my twitter stream, it looks like this:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"version" : "v1",

|

||||

"timestamp" : "2012-10-01T00:00:00.000Z",

|

||||

"event" : {

|

||||

|

|

@ -316,12 +360,12 @@ You should hopefully see an answer to our question. For my twitter stream, it lo

|

|||

"htags" : "IDidntTextYouBackBecause"

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```
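
What the filter, grouping, and ordering accomplish together can be sketched in plain Python. The rows below are invented for illustration (only the `htags`, `lang`, and `tweets` field names come from this tutorial), and `top_hashtags` is a hypothetical helper rather than anything Druid provides.

```python
from collections import Counter


def top_hashtags(rows, lang, limit):
    """Sum tweets per hashtag for one language and return the largest totals."""
    totals = Counter()
    for row in rows:
        if row["lang"] == lang:  # filter on the language dimension
            totals[row["htags"]] += row["tweets"]
    # Order by tweet total (descending), breaking ties by hashtag name.
    ordered = sorted(totals.items(), key=lambda item: (-item[1], item[0]))
    return ordered[:limit]


# Hypothetical sample rows, not real Twitter data.
rows = [
    {"lang": "en", "htags": "android", "tweets": 2.0},
    {"lang": "en", "htags": "nowplaying", "tweets": 5.0},
    {"lang": "en", "htags": "android", "tweets": 1.0},
    {"lang": "es", "htags": "nowplaying", "tweets": 9.0},
    {"lang": "en", "htags": "news", "tweets": 1.0},
]
top = top_hashtags(rows, "en", limit=2)
print(top)
```

Changing the `lang` argument or the `limit` is the in-memory counterpart of tweaking the filter and ordering in the query body.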

|

||||

|

||||

Feel free to tweak other query parameters to answer other questions you may have about the data.

|

||||

|

||||

h2. Additional Information

|

||||

# Additional Information

|

||||

|

||||

This tutorial is merely showcasing a small fraction of what Druid can do. Next, continue on to "The Druid Cluster":./Tutorial:-The-Druid-Cluster.html.

|

||||

This tutorial is merely showcasing a small fraction of what Druid can do. Next, continue on to [The Druid Cluster](./Tutorial:-The-Druid-Cluster.html).

|

||||

|

||||

And thus concludes our journey! Hopefully you learned a thing or two about Druid real-time ingestion, querying Druid, and how Druid can be used to solve problems. If you have additional questions, feel free to post in our "google groups page":http://www.groups.google.com/forum/#!forum/druid-development.

|

||||

And thus concludes our journey! Hopefully you learned a thing or two about Druid real-time ingestion, querying Druid, and how Druid can be used to solve problems. If you have additional questions, feel free to post in our [google groups page](http://www.groups.google.com/forum/#!forum/druid-development).

|

||||

|

|

@ -75,6 +75,7 @@ h2. Architecture

|

|||

h2. Experimental

|

||||

* "About Experimental Features":./About-Experimental-Features.html

|

||||

* "Geographic Queries":./GeographicQueries.html

|

||||

* "Select Query":./SelectQuery.html

|

||||

|

||||

h2. Development

|

||||

* "Versioning":./Versioning.html

|

||||

|

|

|

|||
