This update adds logging for changes made in the AI admin panel. When making configuration changes to Embeddings, LLMs, Personas, Tools, or Spam that aren't site setting related, changes will now be logged in Admin > Logs & Screening. This will help admins debug issues related to AI. In this update a helper lib called `AiStaffActionLogger` is created, which can easily be used in the future to add logging support for any other admin config we need logged for AI.

## 🔍 Overview

When the title suggestions return no suggestions, there is no indication in the UI. In the tag suggester we show a toast when there aren't any suggestions but the request was a success. In this update we make a similar UI indication with a toast for both category and title suggestions. Additionally, for all suggestions we add a "Try again" button to the toast so that suggestions can be generated again if the results yield nothing the first time.

OpenAI ships a new API for completions called the "Responses API".

Certain models (o3-pro) require this API.

Additionally certain features are only made available to the new API.

This allows enabling it per LLM.

see: https://platform.openai.com/docs/api-reference/responses

Introduces a persistent, user-scoped key-value storage system for AI Artifacts, enabling them to be stateful and interactive. This transforms artifacts from static content into mini-applications that can save user input, preferences, and other data.

The core components of this feature are:

1. **Model and API**:

- A new `AiArtifactKeyValue` model and corresponding database table to store data associated with a user and an artifact.

- A new `ArtifactKeyValuesController` provides a RESTful API for CRUD operations (`index`, `set`, `destroy`) on the key-value data.

- Permissions are enforced: users can only modify their own data but can view public data from other users.

2. **Secure JavaScript Bridge**:

- A `postMessage` communication bridge is established between the sandboxed artifact `iframe` and the parent Discourse window.

- A JavaScript API is exposed to the artifact as `window.discourseArtifact` with async methods: `get(key)`, `set(key, value, options)`, `delete(key)`, and `index(filter)`.

- The parent window handles these requests, makes authenticated calls to the new controller, and returns the results to the iframe. This ensures security by keeping untrusted JS isolated.

3. **AI Tool Integration**:

- The `create_artifact` tool is updated with a `requires_storage` boolean parameter.

- If an artifact requires storage, its metadata is flagged, and the system prompt for the code-generating AI is augmented with detailed documentation for the new storage API.

4. **Configuration**:

- Adds hidden site settings `ai_artifact_kv_value_max_length` and `ai_artifact_max_keys_per_user_per_artifact` for throttling.

This also includes a minor fix to use `jsonb_set` when updating artifact metadata, ensuring other metadata fields are preserved.
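The parent-side half of the bridge described in item 2 could look roughly like the sketch below. The message shape (`requestId`, `action`, `key`, `value`), the function names, and the in-memory `Map` that stands in for authenticated calls to the controller are all assumptions made for illustration; none of them are taken from the plugin.

```javascript
// Hypothetical sketch: the message shape and the in-memory store are
// assumptions for illustration, not the plugin's actual protocol.
function createArtifactStore() {
  // Stands in for authenticated requests to the key-value controller.
  const data = new Map();

  // Handles one request of the kind an iframe would send via postMessage.
  return function handleArtifactRequest(message) {
    const { requestId, action, key, value } = message;
    switch (action) {
      case "get":
        return { requestId, success: true, result: data.has(key) ? data.get(key) : null };
      case "set":
        data.set(key, value);
        return { requestId, success: true, result: value };
      case "delete":
        return { requestId, success: true, result: data.delete(key) };
      case "index":
        return { requestId, success: true, result: [...data.keys()] };
      default:
        // Unknown actions are rejected instead of being forwarded anywhere.
        return { requestId, success: false, error: `unknown action: ${action}` };
    }
  };
}

const handle = createArtifactStore();
handle({ requestId: 1, action: "set", key: "theme", value: "dark" });
const reply = handle({ requestId: 2, action: "get", key: "theme" });
const rejected = handle({ requestId: 3, action: "drop_table" });
```

In a real page the parent window would call a handler like this from a `message` event listener and send the reply back to the iframe with `postMessage`, so the untrusted artifact code never makes authenticated requests itself.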

* FEATURE: Display features that rely on multiple personas.

This change makes the previously hidden feature page visible while displaying features, like the AI helper, which relies on multiple personas.

* Fix system specs

* Small fix, reasoning is now available on Claude 4 models

* fix invalid filters should raise, topic filter not working

* fix spec so we are consistent

Examples simulate previous interactions with an LLM and come right after the system prompt. This helps ground the model and produce better responses.
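As a rough illustration of that ordering, the sketch below places example exchanges between the system prompt and the real user input. The `buildMessages` helper, the role names, and the `[question, answer]` pair format are assumptions for illustration and are not the plugin's actual prompt structure.

```javascript
// Hypothetical message layout; the role names and the [question, answer]
// pair format for examples are assumptions for illustration.
function buildMessages(systemPrompt, examples, userInput) {
  const messages = [{ role: "system", content: systemPrompt }];
  // Each example becomes a simulated user/model exchange right after
  // the system prompt.
  for (const [question, answer] of examples) {
    messages.push({ role: "user", content: question });
    messages.push({ role: "assistant", content: answer });
  }
  // The real user input always comes last.
  messages.push({ role: "user", content: userInput });
  return messages;
}

const messages = buildMessages(
  "You summarize forum topics.",
  [["Summarize: A asks, B answers.", "A asked a question and B answered it."]],
  "Summarize: C reports a bug."
);
```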

* DEV: Use structured responses for summaries

* Fix system specs

* Make response_format a first class citizen and update endpoints to support it

* Response format can be specified in the persona

* lint

* switch to jsonb and make column nullable

* Reify structured output chunks. Move JSON parsing to the depths of Completion

* Switch to JsonStreamingTracker for partial JSON parsing

This PR addresses a bug where uploads weren't being cleared after successfully posting a new private message in the AI bot conversations interface. Here's what the changes do:

## Main Fix:

- Makes the `prepareAndSubmitToBot()` method async and adds proper error handling

- Adds `this.uploads.clear()` after successful submission to clear all uploads

- Adds a test to verify that the "New Question" button properly resets the UI with no uploads

## Additional Improvements:

1. **Dynamic Character Length Validation**:

- Uses `siteSettings.min_personal_message_post_length` instead of hardcoded 10 characters

- Updates the error message to show the dynamic character count

- Adds proper pluralization in the localization file for the error message

2. **Bug Fixes**:

- Adds null checks with optional chaining (`link?.topic?.id`) in the sidebar code to prevent potential errors

3. **Code Organization**:

- Moves error handling from the service to the controller for better separation of concerns
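The dynamic length check from item 1 might be sketched as follows. Only the setting name `min_personal_message_post_length` comes from the description above; the helper name, the trimming of whitespace, and the error wording are assumptions, and the actual change uses the localization file for pluralization rather than an inline string.

```javascript
// Hypothetical helper; only the setting name comes from the PR description.
function validateMessageLength(text, siteSettings) {
  const minLength = siteSettings.min_personal_message_post_length;
  // Trimming before measuring is an assumption, not confirmed behaviour.
  if (text.trim().length >= minLength) {
    return { valid: true, error: null };
  }
  // Inline pluralization stands in for the localized message.
  const unit = minLength === 1 ? "character" : "characters";
  return {
    valid: false,
    error: `Message must be at least ${minLength} ${unit} long.`,
  };
}

const settings = { min_personal_message_post_length: 20 };
const tooShort = validateMessageLength("Hi there", settings);
const longEnough = validateMessageLength("How do I configure an LLM?", settings);
```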

This commit adds an empty state when the user doesn't have any PM history. It also retains the new conversation button in the sidebar so it no longer jumps. The button is disabled, and its icon and text are updated.

# Preview

https://github.com/user-attachments/assets/3fe3ac8f-c938-4df4-9afe-11980046944d

# Details

- Group PMs by `last_posted_at`. In this first iteration we group by `7 days`, `30 days`, then by month beyond that.

- I inject a sidebar section link with the relative (`last_posted_at`) date and then update a tracked value to ensure we don't do it again. Then for each month beyond the first 30 days, I add a value to the `loadedMonthLabels` set and we reference that (plus the year) to see if we need to load a new month label.

- I took the creative liberty to remove the `Conversations` section label, as it had no purpose.

- I hid the _collapse all sidebar sections_ caret. This had no purpose.

- Swap `BasicTopicSerializer` to `ListableTopicSerializer` to get access to `last_posted_at`
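The grouping rule in the first bullet can be sketched as below. The function names, the label strings, and the `YYYY-MM` month key are assumptions for illustration; the actual sidebar code injects section links and tracks loaded month labels rather than building a map.

```javascript
// Hypothetical helpers: the label strings and month key format are assumptions.
const MS_PER_DAY = 24 * 60 * 60 * 1000;

function sectionLabelFor(lastPostedAt, now) {
  const postedAt = new Date(lastPostedAt);
  const ageInDays = (now - postedAt) / MS_PER_DAY;
  if (ageInDays <= 7) {
    return "Last 7 days";
  }
  if (ageInDays <= 30) {
    return "Last 30 days";
  }
  // Beyond 30 days, group by calendar month, including the year so that
  // the same month in different years stays separate.
  const month = String(postedAt.getUTCMonth() + 1).padStart(2, "0");
  return `${postedAt.getUTCFullYear()}-${month}`;
}

function groupConversations(topics, now) {
  const groups = new Map(); // insertion order keeps sections in list order
  for (const topic of topics) {
    const label = sectionLabelFor(topic.last_posted_at, now);
    if (!groups.has(label)) {
      groups.set(label, []);
    }
    groups.get(label).push(topic.id);
  }
  return groups;
}

const referenceDate = new Date("2025-06-15T00:00:00Z");
const groups = groupConversations(
  [
    { id: 1, last_posted_at: "2025-06-14T12:00:00Z" },
    { id: 2, last_posted_at: "2025-06-01T00:00:00Z" },
    { id: 3, last_posted_at: "2025-03-10T00:00:00Z" },
  ],
  referenceDate
);
```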

* FEATURE: display more places where AI is used

- Usage was not showing automation or image caption in llm list.

- Also: FIX - reasoning models would time out incorrectly after 60 seconds (raised to 10 minutes)

* correct enum not to enumerate non configured models

* FEATURE: implement chat streamer

This implements a basic chat streamer. It provides 2 things:

1. Gives feedback to the user when LLM is generating

2. Streams stuff much more efficiently to client (given it may take 100ms or so per call to update chat)

This PR is a retry of: #1135, where we migrate AiTools form to FormKit. The previous PR accidentally removed code related to setting enum values, and as a result was reverted. This update includes enums correctly along with the previous updates.

Overview

This PR introduces a Bot Homepage that was first introduced at https://ask.discourse.org/.

Key Features:

- Add a bot homepage: /discourse-ai/ai-bot/conversations

- Display a sidebar with previous bot conversations

- Infinite scroll for large counts

- Sidebar still visible when navigation mode is header_dropdown

- Sidebar visible on homepage and bot PM show view

- Add New Question button to the bottom of sidebar on bot PM show view

- Add persona picker to homepage

This update adds metrics for estimated spending in AI usage. To make use of it, admins must add cost details to the LLM config page (input, output, and cached input costs per 1M tokens). After doing so, the metrics will appear in the AI usage dashboard as the AI plugin is used.
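The arithmetic behind such an estimate is straightforward, as the sketch below shows. The function and field names are hypothetical, and treating cached input tokens as a separate count from regular input tokens is an assumption rather than something the description states.

```javascript
// Hypothetical estimate: names are illustrative, and counting cached input
// tokens separately from regular input tokens is an assumption.
const TOKENS_PER_UNIT = 1_000_000; // costs are entered per 1M tokens

function estimateCost(usage, costsPerMillion) {
  const part = (tokens, cost) => (tokens * cost) / TOKENS_PER_UNIT;
  return (
    part(usage.inputTokens, costsPerMillion.input) +
    part(usage.outputTokens, costsPerMillion.output) +
    part(usage.cachedInputTokens, costsPerMillion.cachedInput)
  );
}

// Example figures are made up for illustration.
const estimated = estimateCost(
  { inputTokens: 2_000_000, outputTokens: 500_000, cachedInputTokens: 1_000_000 },
  { input: 3, output: 15, cachedInput: 0.5 }
);
// 2M * 3/1M + 0.5M * 15/1M + 1M * 0.5/1M = 6 + 7.5 + 0.5 = 14
```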

### 🔍 Overview

This update performs some enhancements to the LLM configuration screen. In particular, it renames the UI for the number of tokens for the prompt to "Context window" since the naming can be confusing to the user. Additionally, it adds a new optional field called "Max output tokens".

* FEATURE: Update model names and specs

- not a bug, but made it explicit that tools and thinking are not a chat thing

- updated all models to latest in presets (Gemini and OpenAI)

* allow larger context windows

In this feature update, we add the UI for the ability to easily configure persona backed AI-features. The feature will still be hidden until structured responses are complete.

Add API methods to AI tools for reading and updating personas, enabling more flexible AI workflows. This allows custom tools to:

- Fetch persona information through `discourse.getPersona()`

- Update personas with modified settings via `discourse.updatePersona()`

- Also update using `persona.update()`

These APIs enable new use cases like "trainable" moderation bots, where users with appropriate permissions can set and refine moderation rules through direct chat interactions, without needing admin panel access.

Also adds a special API scope which allows people to lean on the API for similar actions.

Additionally adds a rather powerful hidden feature that allows custom tools to inject content into the context unconditionally; it can be used for memory and similar features.

This feature update allows for continuing the conversation with Discobot Discoveries in an AI bot chat. After discoveries gives you a response to your search you can continue with the existing context.

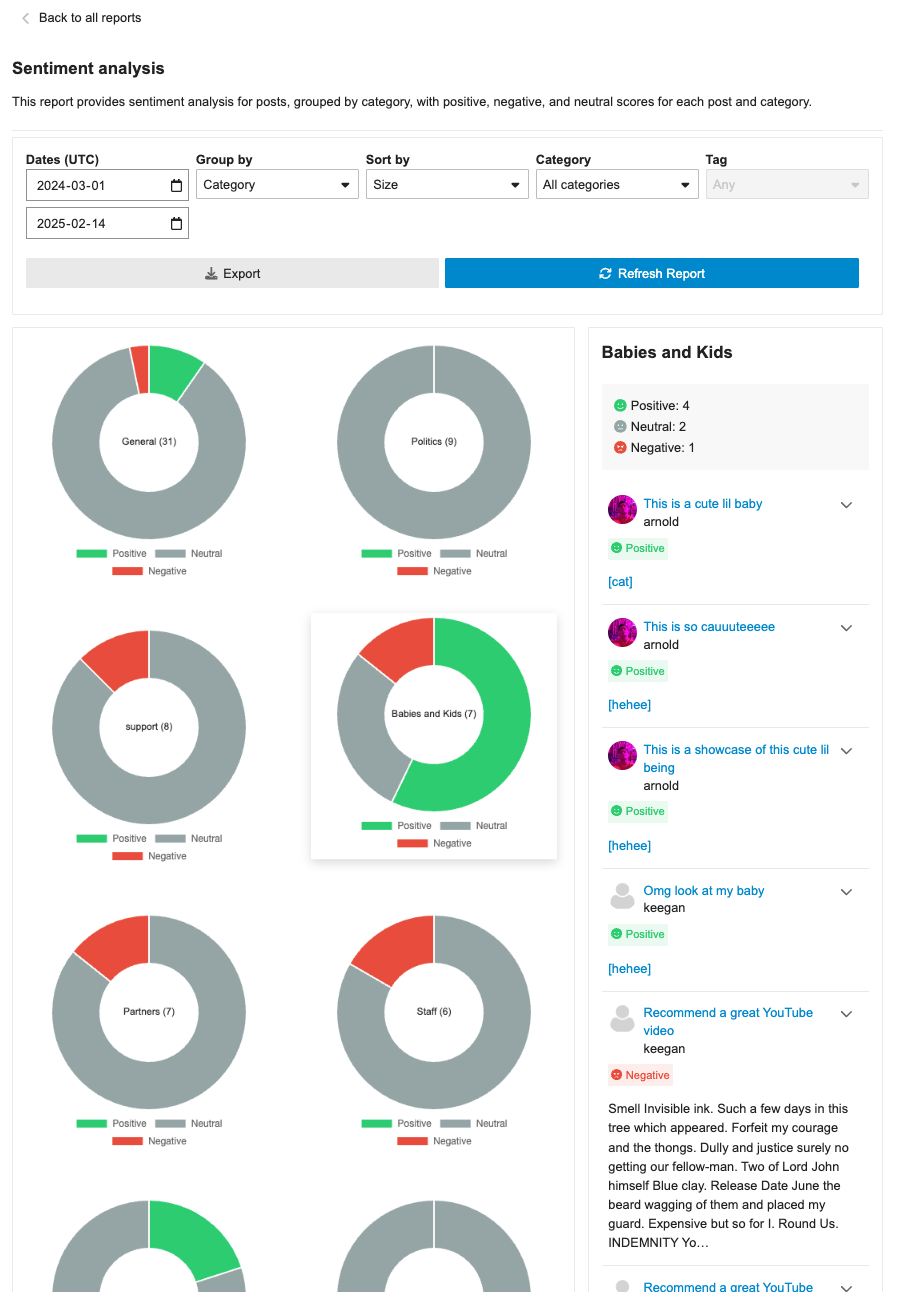

The sentiment analysis report page initially showcases sentiments via category/tag by doughnut visualizations. However, this isn't an optimal view for quickly scanning and comparing each result. This PR updates the overview to include a table visualization with horizontal bars to represent sentiment analysis instead of doughnuts. Doughnut visualizations are still maintained however when accessing the sentiment data in the drill down for individual entries.

This approach is an intermediary step, as we will eventually add whole clustering and sizing visualization instead of a table. As such, no relevant tests are added in this PR.

* REFACTOR: Migrate Personas' form to FormKit

We re-arranged fields into sections so we can better differentiate which options are specific to the AI bot.

* few form-kit improvements

https://github.com/discourse/discourse/pull/31934

---------

Co-authored-by: Joffrey JAFFEUX <j.jaffeux@gmail.com>

This allows for a new mode in persona triage where nothing is posted on topics.

This allows people to perform all triage actions using tools

Additionally introduces new APIs to create chat messages from tools which can be useful in certain moderation scenarios

Co-authored-by: Natalie Tay <natalie.tay@gmail.com>

* remove TODO code

---------

Co-authored-by: Natalie Tay <natalie.tay@gmail.com>

Thinking models such as Claude 3.7 Thinking and o1 / o3 do not support `top_p` or `temperature`.

Previously you had to carefully remove these parameters everywhere. By making this a provider param, we now support blanket removal without forcing people to update automation rules or personas.
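A minimal sketch of the idea: sampling parameters are stripped at the provider level, so callers such as personas or automation rules can keep passing them. The provider param names `disable_top_p` and `disable_temperature`, and the helper itself, are assumptions for illustration and may not match the plugin's actual names.

```javascript
// Hypothetical sketch: the provider param names are assumptions.
function applyProviderParams(payload, providerParams) {
  // Copy so the caller's payload is left untouched.
  const result = { ...payload };
  if (providerParams.disable_top_p) {
    delete result.top_p;
  }
  if (providerParams.disable_temperature) {
    delete result.temperature;
  }
  return result;
}

const original = { model: "some-thinking-model", temperature: 0.2, top_p: 0.9 };
const sanitized = applyProviderParams(original, {
  disable_top_p: true,
  disable_temperature: true,
});
```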

This update adds the ability to disable search discoveries. This can be done through a tooltip when search discoveries are shown. It can also be done in the AI user preferences, which has also been updated to accommodate more than just the one image caption setting.

This PR enhances the LLM triage automation with several important improvements:

- Add ability to use AI personas for automated replies instead of canned replies

- Add support for whisper responses

- Refactor LLM persona reply functionality into a reusable method

- Add new settings to configure response behavior in automations

- Improve error handling and logging

- Fix handling of personal messages in the triage flow

- Add comprehensive test coverage for new features

- Make personas configurable with more flexible requirements

This allows for more dynamic and context-aware responses in automated workflows, with better control over visibility and attribution.

## LLM Persona Triage

- Allows automated responses to posts using AI personas

- Configurable to respond as regular posts or whispers

- Adds context-aware formatting for topics and private messages

- Provides special handling for topic metadata (title, category, tags)

## LLM Tool Triage

- Enables custom AI tools to process and respond to posts

- Tools can analyze post content and invoke personas when needed

- Zero-parameter tools can be used for automated workflows

- Not enabled in production yet

## Implementation Details

- Added new scriptable registration in discourse_automation/ directory

- Created core implementation in lib/automation/ modules

- Enhanced PromptMessagesBuilder with topic-style formatting

- Added helper methods for persona and tool selection in UI

- Extended AI Bot functionality to support whisper responses

- Added rate limiting to prevent abuse

## Other Changes

- Added comprehensive test coverage for both automation types

- Enhanced tool runner with LLM integration capabilities

- Improved error handling and logging

This feature allows forum admins to configure AI personas to automatically respond to posts based on custom criteria and leverage AI tools for more complex triage workflows.

Tool Triage has been disabled in production while we finalize details of new scripting capabilities.

**This PR includes a variety of updates to the Sentiment Analysis report:**

- [X] Conditionally showing sentiment reports based on `sentiment_enabled` setting

- [X] Sentiment reports should only be visible in sidebar if data is in the reports

- [X] Fix infinite loading of posts in drill down

- [x] Fix markdown emojis not showing as emoji representation

- [x] Drill down of posts should have URL

- [x] ~~Different doughnut sizing based on post count~~ [reverting and will address in follow-up (see: `/t/146786/47`)]

- [X] Hide non-functional export button

- [X] Sticky drill down filter nav

Adds support for "thinking tokens", a feature that exposes the model's reasoning process before providing the final response. Key improvements include:

- Add a new Thinking class to handle thinking content from LLMs

- Modify endpoints (Claude, AWS Bedrock) to handle thinking output

- Update AI bot to display thinking in collapsible details section

- Fix SEARCH/REPLACE blocks to support empty replacement strings and general improvements to artifact editing

- Allow configurable temperature in triage and report automations

- Various bug fixes and improvements to diff parsing

* FEATURE: full support for Sonnet 3.7

- Adds support for Sonnet 3.7 with reasoning on bedrock and anthropic

- Fixes regression where provider params were not populated

Note: reasoning tokens are hardcoded to a minimum of 100 and a maximum of 65536.

* FIX: OpenAI non-reasoning models need to use the deprecated max_tokens
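The reasoning-token bounds noted above could be enforced with a simple clamp. This is only a sketch: the constant and function names are hypothetical, and reading "hardcoded to a minimum and maximum" as clamping a requested value into that range is an assumption.

```javascript
// Hypothetical names; the bounds themselves come from the note above.
const MIN_REASONING_TOKENS = 100;
const MAX_REASONING_TOKENS = 65536;

// Clamp a requested reasoning-token budget into the supported range.
function clampReasoningTokens(requested) {
  return Math.min(MAX_REASONING_TOKENS, Math.max(MIN_REASONING_TOKENS, requested));
}

const tooLow = clampReasoningTokens(10);
const inRange = clampReasoningTokens(2048);
const tooHigh = clampReasoningTokens(1_000_000);
```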

* FEATURE: Experimental search results from an AI Persona.

When a user searches Discourse, we'll send the query to an AI Persona to provide additional context and enrich the results. The feature depends on the user being a member of a group to which the persona has access.

* Update assets/stylesheets/common/ai-blinking-animation.scss

Co-authored-by: Keegan George <kgeorge13@gmail.com>

---------

Co-authored-by: Keegan George <kgeorge13@gmail.com>

## 🔍 Overview

This update adds a new report page at `admin/reports/sentiment_analysis` where admins can see a sentiment analysis report for the forum grouped by either category or tags.

## ➕ More details

The report can break down either category or tags into positive/negative/neutral sentiments based on the grouping (category/tag). Clicking on the doughnut visualization will bring up a post list of all the posts that were involved in that classification with further sentiment classifications by post.

The report can additionally be sorted in alphabetical order or by size, as well as be filtered by either category/tag based on the grouping.

## 👨🏽💻 Technical Details

The new admin report is registered via the plugin API with `api.registerReportModeComponent` to register the custom sentiment doughnut report. However, when each doughnut visualization is clicked, a new endpoint at `/discourse-ai/sentiment/posts` is fetched to showcase posts classified by sentiments based on the respective params.

## 📸 Screenshots

* FEATURE: Native PDF support

This amends it so we use PDF Reader gem to extract text from PDFs

* This means that our simple pdf eval passes at last

* fix spec

* skip test in CI

* test file support

* Update lib/utils/image_to_text.rb

Co-authored-by: Alan Guo Xiang Tan <gxtan1990@gmail.com>

* address pr comments

---------

Co-authored-by: Alan Guo Xiang Tan <gxtan1990@gmail.com>

This PR introduces several enhancements and refactorings to the AI Persona and RAG (Retrieval-Augmented Generation) functionalities within the discourse-ai plugin. Here's a breakdown of the changes:

**1. LLM Model Association for RAG and Personas:**

- **New Database Columns:** Adds `rag_llm_model_id` to both `ai_personas` and `ai_tools` tables. This allows specifying a dedicated LLM for RAG indexing, separate from the persona's primary LLM. Adds `default_llm_id` and `question_consolidator_llm_id` to `ai_personas`.

- **Migration:** Includes a migration (`20250210032345_migrate_persona_to_llm_model_id.rb`) to populate the new `default_llm_id` and `question_consolidator_llm_id` columns in `ai_personas` based on the existing `default_llm` and `question_consolidator_llm` string columns, and a post migration to remove the latter.

- **Model Changes:** The `AiPersona` and `AiTool` models now `belong_to` an `LlmModel` via `rag_llm_model_id`. The `LlmModel.proxy` method now accepts an `LlmModel` instance instead of just an identifier. `AiPersona` now has `default_llm_id` and `question_consolidator_llm_id` attributes.

- **UI Updates:** The AI Persona and AI Tool editors in the admin panel now allow selecting an LLM for RAG indexing (if PDF/image support is enabled). The RAG options component displays an LLM selector.

- **Serialization:** The serializers (`AiCustomToolSerializer`, `AiCustomToolListSerializer`, `LocalizedAiPersonaSerializer`) have been updated to include the new `rag_llm_model_id`, `default_llm_id` and `question_consolidator_llm_id` attributes.

**2. PDF and Image Support for RAG:**

- **Site Setting:** Introduces a new hidden site setting, `ai_rag_pdf_images_enabled`, to control whether PDF and image files can be indexed for RAG. This defaults to `false`.

- **File Upload Validation:** The `RagDocumentFragmentsController` now checks the `ai_rag_pdf_images_enabled` setting and allows PDF, PNG, JPG, and JPEG files if enabled. Error handling is included for cases where PDF/image indexing is attempted with the setting disabled.

- **PDF Processing:** Adds a new utility class, `DiscourseAi::Utils::PdfToImages`, which uses ImageMagick (`magick`) to convert PDF pages into individual PNG images. A maximum PDF size and conversion timeout are enforced.

- **Image Processing:** A new utility class, `DiscourseAi::Utils::ImageToText`, is included to handle OCR for the images and PDFs.

- **RAG Digestion Job:** The `DigestRagUpload` job now handles PDF and image uploads. It uses `PdfToImages` and `ImageToText` to extract text and create document fragments.

- **UI Updates:** The RAG uploader component now accepts PDF and image file types if `ai_rag_pdf_images_enabled` is true. The UI text is adjusted to indicate supported file types.

**3. Refactoring and Improvements:**

- **LLM Enumeration:** The `DiscourseAi::Configuration::LlmEnumerator` now provides a `values_for_serialization` method, which returns a simplified array of LLM data (id, name, vision_enabled) suitable for use in serializers. This avoids exposing unnecessary details to the frontend.

- **AI Helper:** The `AiHelper::Assistant` now takes optional `helper_llm` and `image_caption_llm` parameters in its constructor, allowing for greater flexibility.

- **Bot and Persona Updates:** Several updates were made across the codebase, changing the string-based association to an LLM to the new model-based association.

- **Audit Logs:** The `DiscourseAi::Completions::Endpoints::Base` now formats raw request payloads as pretty JSON for easier auditing.

- **Eval Script:** An evaluation script is included.

**4. Testing:**

- The PR introduces a new eval system for LLMs, which allows us to test how functionality works across various LLM providers. This lives in `/evals`.