mirror of https://github.com/apache/druid.git

Merge branch 'master' of github.com:metamx/druid into move-firehose

This commit is contained in:

commit

eef034ca7e

Binary file not shown.

Binary file not shown.

2

build.sh

|

|

@ -30,4 +30,4 @@ echo "For examples, see: "

|

|||

echo " "

|

||||

ls -1 examples/*/*sh

|

||||

echo " "

|

||||

echo "See also http://druid.io/docs/0.6.81"

|

||||

echo "See also http://druid.io/docs/latest"

|

||||

|

|

|

|||

|

|

@ -28,7 +28,7 @@

|

|||

<parent>

|

||||

<groupId>io.druid</groupId>

|

||||

<artifactId>druid</artifactId>

|

||||

<version>0.6.83-SNAPSHOT</version>

|

||||

<version>0.6.102-SNAPSHOT</version>

|

||||

</parent>

|

||||

|

||||

<dependencies>

|

||||

|

|

|

|||

|

|

@ -28,7 +28,7 @@

|

|||

<parent>

|

||||

<groupId>io.druid</groupId>

|

||||

<artifactId>druid</artifactId>

|

||||

<version>0.6.83-SNAPSHOT</version>

|

||||

<version>0.6.102-SNAPSHOT</version>

|

||||

</parent>

|

||||

|

||||

<dependencies>

|

||||

|

|

|

|||

|

|

@ -167,9 +167,20 @@ For example, data for a day may be split by the dimension "last\_name" into two

|

|||

In hashed partition type, the number of partitions is determined based on the targetPartitionSize and cardinality of input set and the data is partitioned based on the hashcode of the row.

|

||||

|

||||

It is recommended to use Hashed partition as it is more efficient than singleDimension since it does not need to determine the dimension for creating partitions.

|

||||

Hashing also gives better distribution of data resulting in equal sized partitons and improving query performance

|

||||

Hashing also gives better distribution of data resulting in equal sized partitions and improving query performance

|

||||

|

||||

To use this option, the indexer must be given a target partition size. It can then find a good set of partition ranges on its own.

|

||||

To have Druid determine optimal partitions automatically, the indexer must be given a target partition size. It can then find a good set of partition ranges on its own.

|

||||

|

||||

#### Configuration for disabling auto-sharding and creating a fixed number of partitions

|

||||

Druid can be configured to skip the determine-partitions step and create a fixed number of shards by specifying numShards in a hashed partitionsSpec.

|

||||

For example, this configuration skips determining optimal partitions and always creates 4 shards for every segment granular interval:

|

||||

|

||||

```json

|

||||

"partitionsSpec": {

|

||||

"type": "hashed",

|

||||

"numShards": 4

|

||||

}

|

||||

```

|

||||

|

||||

|property|description|required?|

|

||||

|--------|-----------|---------|

|

||||

|

|

@ -177,6 +188,7 @@ To use this option, the indexer must be given a target partition size. It can th

|

|||

|targetPartitionSize|target number of rows to include in a partition, should be a number that targets segments of 700MB\~1GB.|yes|

|

||||

|partitionDimension|the dimension to partition on. Leave blank to select a dimension automatically.|no|

|

||||

|assumeGrouped|assume input data has already been grouped on time and dimensions. This is faster, but can choose suboptimal partitions if the assumption is violated.|no|

|

||||

|numShards|Provides a way to manually override Druid's automatic sharding and specify the number of shards to create for each segment granular interval. It is only supported by the hashed partitionsSpec, and targetPartitionSize must be set to -1.|no|
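
The two modes in the table above can be sketched roughly as follows: either a shard count is derived from the input cardinality and `targetPartitionSize`, or a fixed `numShards` is used and the determine-partitions step is skipped. The function names, the ceiling-division rule, and the use of CRC32 as the hash are assumptions made for illustration; this is not Druid's actual implementation.

```python
import zlib

def shard_count(cardinality, target_partition_size, num_shards=None):
    """Return the number of shards for one segment granular interval."""
    if num_shards is not None:
        # Fixed sharding: no need to estimate cardinality at all.
        return num_shards
    # Automatic sharding: ceiling division so each shard holds
    # at most roughly target_partition_size rows.
    return max(1, -(-cardinality // target_partition_size))

def shard_for_row(row_key, shards):
    """Assign a row to a shard by a stable hash (CRC32 is a stand-in)."""
    return zlib.crc32(row_key.encode("utf-8")) % shards
```

With these assumptions, an interval holding 10,000,000 distinct rows and a target of 3,000,000 rows per partition would get 4 shards, the same layout the fixed `"numShards": 4` example produces without the estimation pass.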

|

||||

|

||||

### Updater job spec

|

||||

|

||||

|

|

|

|||

|

|

@ -81,7 +81,7 @@ druid.server.http.numThreads=50

|

|||

druid.request.logging.type=emitter

|

||||

druid.request.logging.feed=druid_requests

|

||||

|

||||

druid.monitoring.monitors=["com.metamx.metrics.SysMonitor","com.metamx.metrics.JvmMonitor", "io.druid.client.cache.CacheMonitor"]

|

||||

druid.monitoring.monitors=["com.metamx.metrics.SysMonitor","com.metamx.metrics.JvmMonitor"]

|

||||

|

||||

# Emit metrics over http

|

||||

druid.emitter=http

|

||||

|

|

@ -106,16 +106,16 @@ The broker module uses several of the default modules in [Configuration](Configu

|

|||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.broker.cache.sizeInBytes`|Maximum size of the cache. If this is zero, cache is disabled.|10485760 (10MB)|

|

||||

|`druid.broker.cache.initialSize`|The initial size of the cache in bytes.|500000|

|

||||

|`druid.broker.cache.logEvictionCount`|If this is non-zero, there will be an eviction of entries.|0|

|

||||

|`druid.broker.cache.sizeInBytes`|Maximum cache size in bytes. Zero disables caching.|0|

|

||||

|`druid.broker.cache.initialSize`|Initial size of the hashtable backing the cache.|500000|

|

||||

|`druid.broker.cache.logEvictionCount`|If non-zero, log cache eviction every `logEvictionCount` items.|0|

|

||||

|

||||

#### Memcache

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.broker.cache.expiration`|Memcache [expiration time ](https://code.google.com/p/memcached/wiki/NewCommands#Standard_Protocol).|2592000 (30 days)|

|

||||

|`druid.broker.cache.timeout`|Maximum time in milliseconds to wait for a response from Memcache.|500|

|

||||

|`druid.broker.cache.hosts`|Memcache hosts.|none|

|

||||

|`druid.broker.cache.maxObjectSize`|Maximum object size in bytes for a Memcache object.|52428800 (50 MB)|

|

||||

|`druid.broker.cache.memcachedPrefix`|Key prefix for all keys in Memcache.|druid|

|

||||

|`druid.broker.cache.expiration`|Memcached [expiration time](https://code.google.com/p/memcached/wiki/NewCommands#Standard_Protocol).|2592000 (30 days)|

|

||||

|`druid.broker.cache.timeout`|Maximum time in milliseconds to wait for a response from Memcached.|500|

|

||||

|`druid.broker.cache.hosts`|Comma-separated list of Memcached hosts `<host:port>`.|none|

|

||||

|`druid.broker.cache.maxObjectSize`|Maximum object size in bytes for a Memcached object.|52428800 (50 MB)|

|

||||

|`druid.broker.cache.memcachedPrefix`|Key prefix for all keys in Memcached.|druid|

|

||||

|

|

|

|||

|

|

@ -4,7 +4,7 @@ layout: doc_page

|

|||

|

||||

# Configuring Druid

|

||||

|

||||

This describes the basic server configuration that is loaded by all the server processes; the same file is loaded by all. See also the json "specFile" descriptions in [Realtime](Realtime.html) and [Batch-ingestion](Batch-ingestion.html).

|

||||

This describes the basic server configuration that is loaded by all Druid server processes; the same file is loaded by all. See also the JSON "specFile" descriptions in [Realtime](Realtime.html) and [Batch-ingestion](Batch-ingestion.html).

|

||||

|

||||

## JVM Configuration Best Practices

|

||||

|

||||

|

|

@ -26,7 +26,7 @@ Note: as a future item, we’d like to consolidate all of the various configurat

|

|||

|

||||

### Emitter Module

|

||||

|

||||

The Druid servers emit various metrics and alerts via something we call an Emitter. There are two emitter implementations included with the code, one that just logs to log4j and one that does POSTs of JSON events to a server. The properties for using the logging emitter are described below.

|

||||

The Druid servers emit various metrics and alerts via something we call an Emitter. There are two emitter implementations included with the code, one that just logs to log4j ("logging", which is used by default if no emitter is specified) and one that does POSTs of JSON events to a server ("http"). The properties for using the logging emitter are described below.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|

|

@ -47,7 +47,7 @@ The Druid servers emit various metrics and alerts via something we call an Emitt

|

|||

|`druid.emitter.http.timeOut`|The timeout for data reads.|PT5M|

|

||||

|`druid.emitter.http.flushMillis`|How often the internal message buffer is flushed (data is sent).|60000|

|

||||

|`druid.emitter.http.flushCount`|How many messages can the internal message buffer hold before flushing (sending).|500|

|

||||

|`druid.emitter.http.recipientBaseUrl`|The base URL to emit messages to.|none|

|

||||

|`druid.emitter.http.recipientBaseUrl`|The base URL to emit messages to. Druid will POST JSON to be consumed at the HTTP endpoint specified by this property.|none|
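
The interaction between `flushMillis` and `flushCount` can be pictured with a small buffer that sends its contents when either limit is reached. This is only a sketch: the `BufferedEmitter` class, its `send` callback, and the injectable `clock` are hypothetical names, and the real HTTP emitter may differ (for example, it may flush from a background timer rather than on `emit`).

```python
import time

class BufferedEmitter:
    """Hypothetical buffer that flushes on a count or time threshold."""

    def __init__(self, send, flush_count=500, flush_millis=60000,
                 clock=time.monotonic):
        self.send = send                      # callable receiving a list of events
        self.flush_count = flush_count
        self.flush_seconds = flush_millis / 1000.0
        self.clock = clock
        self.buffer = []
        self.last_flush = clock()

    def emit(self, event):
        self.buffer.append(event)
        full = len(self.buffer) >= self.flush_count
        stale = self.clock() - self.last_flush >= self.flush_seconds
        if full or stale:
            self.flush()

    def flush(self):
        # Send a copy of the buffered events, then reset buffer and timer.
        if self.buffer:
            self.send(list(self.buffer))
            self.buffer.clear()
        self.last_flush = self.clock()
```

In a real deployment the `send` callback would POST the batch as JSON to the endpoint named by `druid.emitter.http.recipientBaseUrl`.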

|

||||

|

||||

### Http Client Module

|

||||

|

||||

|

|

@ -56,7 +56,7 @@ This is the HTTP client used by [Broker](Broker.html) nodes.

|

|||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.broker.http.numConnections`|Size of connection pool for the Broker to connect to historical and real-time nodes. If there are more queries than this number that all need to speak to the same node, then they will queue up.|5|

|

||||

|`druid.broker.http.readTimeout`|The timeout for data reads.|none|

|

||||

|`druid.broker.http.readTimeout`|The timeout for data reads.|PT15M|

|

||||

|

||||

### Curator Module

|

||||

|

||||

|

|

@ -64,17 +64,17 @@ Druid uses [Curator](http://curator.incubator.apache.org/) for all [Zookeeper](h

|

|||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.zk.service.host`|The Zookeeper hosts to connect to.|none|

|

||||

|`druid.zk.service.sessionTimeoutMs`|Zookeeper session timeout.|30000|

|

||||

|`druid.zk.service.host`|The ZooKeeper hosts to connect to. This is a REQUIRED property and therefore a host address must be supplied.|none|

|

||||

|`druid.zk.service.sessionTimeoutMs`|ZooKeeper session timeout, in milliseconds.|30000|

|

||||

|`druid.curator.compress`|Boolean flag for whether or not created Znodes should be compressed.|false|

|

||||

|

||||

### Announcer Module

|

||||

|

||||

The announcer module is used to announce and unannounce Znodes in Zookeeper (using Curator).

|

||||

The announcer module is used to announce and unannounce Znodes in ZooKeeper (using Curator).

|

||||

|

||||

#### Zookeeper Paths

|

||||

#### ZooKeeper Paths

|

||||

|

||||

See [Zookeeper](Zookeeper.html).

|

||||

See [ZooKeeper](ZooKeeper.html).

|

||||

|

||||

#### Data Segment Announcer

|

||||

|

||||

|

|

@ -84,11 +84,11 @@ Data segment announcers are used to announce segments.

|

|||

|--------|-----------|-------|

|

||||

|`druid.announcer.type`|Choices: legacy or batch. The type of data segment announcer to use.|legacy|

|

||||

|

||||

#### Single Data Segment Announcer

|

||||

##### Single Data Segment Announcer

|

||||

|

||||

In legacy Druid, each segment served by a node would be announced as an individual Znode.

|

||||

|

||||

#### Batch Data Segment Announcer

|

||||

##### Batch Data Segment Announcer

|

||||

|

||||

In current Druid, multiple data segments may be announced under the same Znode.

|

||||

|

||||

|

|

@ -105,16 +105,8 @@ This module contains query processing functionality.

|

|||

|--------|-----------|-------|

|

||||

|`druid.processing.buffer.sizeBytes`|This specifies a buffer size for the storage of intermediate results. The computation engine in both the Historical and Realtime nodes will use a scratch buffer of this size to do all of their intermediate computations off-heap. Larger values allow for more aggregations in a single pass over the data while smaller values can require more passes depending on the query that is being executed.|1073741824 (1GB)|

|

||||

|`druid.processing.formatString`|Realtime and historical nodes use this format string to name their processing threads.|processing-%s|

|

||||

|`druid.processing.numThreads`|The number of processing threads to have available for parallel processing of segments. Our rule of thumb is `num_cores - 1`, this means that even under heavy load there will still be one core available to do background tasks like talking with ZK and pulling down segments.|1|

|

||||

|`druid.processing.numThreads`|The number of processing threads to have available for parallel processing of segments. Our rule of thumb is `num_cores - 1`, which means that even under heavy load there will still be one core available to do background tasks like talking with ZooKeeper and pulling down segments. If only one core is available, this property defaults to the value `1`.|Number of cores - 1 (or 1)|
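
The default described for `druid.processing.numThreads` amounts to a one-line rule. The sketch below restates it in Python; the function name is hypothetical and the code is not taken from Druid.

```python
import os

def default_processing_threads(cores=None):
    """Documented default: number of cores minus one, but never below 1."""
    if cores is None:
        cores = os.cpu_count() or 1  # os.cpu_count() may return None
    return max(cores - 1, 1)
```

On an 8-core machine this yields 7 processing threads, leaving one core free for background work such as talking to ZooKeeper and pulling down segments.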

|

||||

|

||||

### AWS Module

|

||||

|

||||

This module is used to interact with S3.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.s3.accessKey`|The access key to use to access S3.|none|

|

||||

|`druid.s3.secretKey`|The secret key to use to access S3.|none|

|

||||

|

||||

### Metrics Module

|

||||

|

||||

|

|

@ -123,7 +115,15 @@ The metrics module is used to track Druid metrics.

|

|||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.monitoring.emissionPeriod`|How often metrics are emitted.|PT1m|

|

||||

|`druid.monitoring.monitors`|List of Druid monitors.|none|

|

||||

|`druid.monitoring.monitors`|Sets list of Druid monitors used by a node. Each monitor is specified as `com.metamx.metrics.<monitor-name>` (see below for names and more information). For example, you can specify monitors for a Broker with `druid.monitoring.monitors=["com.metamx.metrics.SysMonitor","com.metamx.metrics.JvmMonitor"]`.|none (no monitors)|

|

||||

|

||||

The following monitors are available:

|

||||

|

||||

* CacheMonitor – Emits metrics (to logs) about the segment results cache for Historical and Broker nodes. Reports typical cache statistics, including hits, misses, rates, and size (bytes and number of entries), as well as timeouts and errors.

|

||||

* SysMonitor – This uses the [SIGAR library](http://www.hyperic.com/products/sigar) to report on various system activities and statuses.

|

||||

* ServerMonitor – Reports statistics on Historical nodes.

|

||||

* JvmMonitor – Reports JVM-related statistics.

|

||||

* RealtimeMetricsMonitor – Reports statistics on Realtime nodes.

|

||||

|

||||

### Server Module

|

||||

|

||||

|

|

@ -137,22 +137,24 @@ This module is used for Druid server nodes.

|

|||

|

||||

### Storage Node Module

|

||||

|

||||

This module is used by nodes that store data (historical and real-time nodes).

|

||||

This module is used by nodes that store data (Historical and Realtime).

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.server.maxSize`|The maximum number of bytes worth of segments that the node wants assigned to it. This is not a limit that the historical nodes actually enforce, they just publish it to the coordinator and trust the coordinator to do the right thing|0|

|

||||

|`druid.server.tier`|Druid server host port.|none|

|

||||

|`druid.server.maxSize`|The maximum number of bytes-worth of segments that the node wants assigned to it. This is not a limit that Historical nodes actually enforce, just a value published to the Coordinator node so it can plan accordingly.|0|

|

||||

|`druid.server.tier`| A string to name the distribution tier that the storage node belongs to. Many of the [rules Coordinator nodes use](Rule-Configuration.html) to manage segments can be keyed on tiers. | `_default_tier` |

|

||||

|`druid.server.priority`|In a tiered architecture, the priority of the tier, thus allowing control over which nodes are queried. Higher numbers mean higher priority. The default (no priority) works for architecture with no cross replication (tiers that have no data-storage overlap). Data centers typically have equal priority. | 0 |

|

||||

|

||||

|

||||

#### Segment Cache

|

||||

|

||||

Druid storage nodes maintain information about segments they have already downloaded.

|

||||

Druid storage nodes maintain information about segments they have already downloaded, and a disk cache to store that data.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.segmentCache.locations`|Segments assigned to a historical node are first stored on the local file system and then served by the historical node. These locations define where that local cache resides|none|

|

||||

|`druid.segmentCache.locations`|Segments assigned to a Historical node are first stored on the local file system (in a disk cache) and then served by the Historical node. These locations define where that local cache resides. | none (no caching) |

|

||||

|`druid.segmentCache.deleteOnRemove`|Delete segment files from cache once a node is no longer serving a segment.|true|

|

||||

|`druid.segmentCache.infoDir`|Historical nodes keep track of the segments they are serving so that when the process is restarted they can reload the same segments without waiting for the coordinator to reassign. This path defines where this metadata is kept. Directory will be created if needed.|${first_location}/info_dir|

|

||||

|`druid.segmentCache.infoDir`|Historical nodes keep track of the segments they are serving so that when the process is restarted they can reload the same segments without waiting for the Coordinator to reassign. This path defines where this metadata is kept. Directory will be created if needed.|${first_location}/info_dir|

|

||||

|

||||

### Jetty Server Module

|

||||

|

||||

|

|

@ -193,7 +195,7 @@ This module is required by nodes that can serve queries.

|

|||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.query.chunkPeriod`|Long interval queries may be broken into shorter interval queries.|0|

|

||||

|`druid.query.chunkPeriod`|Long-interval queries (of any type) may be broken into shorter interval queries, reducing the impact on resources. Use ISO 8601 periods. For example, if this property is set to `P1M` (one month), then a query covering a year would be broken into 12 smaller queries. |0 (off)|
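
To make the `P1M` example concrete, the sketch below splits a one-year interval into month-sized chunks. The helper names are hypothetical, only whole-month periods are handled, and the start date is assumed to fall on a day that exists in every month (such as the 1st); Druid's own chunking logic may differ.

```python
from datetime import date

def add_months(d, months):
    """Advance a date by whole months (assumes the day exists in the target month)."""
    index = d.year * 12 + (d.month - 1) + months
    return date(index // 12, index % 12 + 1, d.day)

def chunk_interval(start, end, months=1):
    """Split the half-open interval [start, end) into chunks of `months` months."""
    chunks = []
    cursor = start
    while cursor < end:
        nxt = min(add_months(cursor, months), end)
        chunks.append((cursor, nxt))
        cursor = nxt
    return chunks
```

Calling `chunk_interval(date(2013, 1, 1), date(2014, 1, 1))` produces 12 consecutive one-month intervals, matching the "query covering a year" case in the description.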

|

||||

|

||||

#### GroupBy Query Config

|

||||

|

||||

|

|

@ -210,17 +212,28 @@ This module is required by nodes that can serve queries.

|

|||

|--------|-----------|-------|

|

||||

|`druid.query.search.maxSearchLimit`|Maximum number of search results to return.|1000|

|

||||

|

||||

|

||||

### Discovery Module

|

||||

|

||||

The discovery module is used for service discovery.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.discovery.curator.path`|Services announce themselves under this Zookeeper path.|/druid/discovery|

|

||||

|`druid.discovery.curator.path`|Services announce themselves under this ZooKeeper path.|/druid/discovery|

|

||||

|

||||

|

||||

#### Indexing Service Discovery Module

|

||||

|

||||

This module is used to find the [Indexing Service](Indexing-Service.html) using Curator service discovery.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.selectors.indexing.serviceName`|The druid.service name of the indexing service Overlord node. To start the Overlord with a different name, set it with this property. |overlord|

|

||||

|

||||

|

||||

### Server Inventory View Module

|

||||

|

||||

This module is used to read announcements of segments in Zookeeper. The configs are identical to the Announcer Module.

|

||||

This module is used to read announcements of segments in ZooKeeper. The configs are identical to the Announcer Module.

|

||||

|

||||

### Database Connector Module

|

||||

|

||||

|

|

@ -228,7 +241,6 @@ These properties specify the jdbc connection and other configuration around the

|

|||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.db.connector.pollDuration`|The jdbc connection URI.|none|

|

||||

|`druid.db.connector.user`|The username to connect with.|none|

|

||||

|`druid.db.connector.password`|The password to connect with.|none|

|

||||

|`druid.db.connector.createTables`|If Druid requires a table and it doesn't exist, create it?|true|

|

||||

|

|

@ -250,13 +262,6 @@ The Jackson Config manager reads and writes config entries from the Druid config

|

|||

|--------|-----------|-------|

|

||||

|`druid.manager.config.pollDuration`|How often the manager polls the config table for updates.|PT1m|

|

||||

|

||||

### Indexing Service Discovery Module

|

||||

|

||||

This module is used to find the [Indexing Service](Indexing-Service.html) using Curator service discovery.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.selectors.indexing.serviceName`|The druid.service name of the indexing service Overlord node.|none|

|

||||

|

||||

### DataSegment Pusher/Puller Module

|

||||

|

||||

|

|

@ -290,6 +295,16 @@ This deep storage is used to interface with Amazon's S3.

|

|||

|`druid.storage.archiveBucket`|S3 bucket name for archiving when running the indexing-service *archive task*.|none|

|

||||

|`druid.storage.archiveBaseKey`|S3 object key prefix for archiving.|none|

|

||||

|

||||

#### AWS Module

|

||||

|

||||

This module is used to interact with S3.

|

||||

|

||||

|Property|Description|Default|

|

||||

|--------|-----------|-------|

|

||||

|`druid.s3.accessKey`|The access key to use to access S3.|none|

|

||||

|`druid.s3.secretKey`|The secret key to use to access S3.|none|

|

||||

|

||||

|

||||

#### HDFS Deep Storage

|

||||

|

||||

This deep storage is used to interface with HDFS.

|

||||

|

|

|

|||

|

|

@ -19,13 +19,13 @@ Clone Druid and build it:

|

|||

git clone https://github.com/metamx/druid.git druid

|

||||

cd druid

|

||||

git fetch --tags

|

||||

git checkout druid-0.6.81

|

||||

git checkout druid-0.6.101

|

||||

./build.sh

|

||||

```

|

||||

|

||||

### Downloading the DSK (Druid Standalone Kit)

|

||||

|

||||

[Download](http://static.druid.io/artifacts/releases/druid-services-0.6.81-bin.tar.gz) a stand-alone tarball and run it:

|

||||

[Download](http://static.druid.io/artifacts/releases/druid-services-0.6.101-bin.tar.gz) a stand-alone tarball and run it:

|

||||

|

||||

``` bash

|

||||

tar -xzf druid-services-0.X.X-bin.tar.gz

|

||||

|

|

|

|||

|

|

@ -66,7 +66,7 @@ druid.host=#{IP_ADDR}:8080

|

|||

druid.port=8080

|

||||

druid.service=druid/prod/indexer

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.101"]

|

||||

|

||||

druid.zk.service.host=#{ZK_IPs}

|

||||

druid.zk.paths.base=/druid/prod

|

||||

|

|

@ -115,7 +115,7 @@ druid.host=#{IP_ADDR}:8080

|

|||

druid.port=8080

|

||||

druid.service=druid/prod/worker

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.81","io.druid.extensions:druid-kafka-seven:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.101","io.druid.extensions:druid-kafka-seven:0.6.101"]

|

||||

|

||||

druid.zk.service.host=#{ZK_IPs}

|

||||

druid.zk.paths.base=/druid/prod

|

||||

|

|

|

|||

|

|

@ -27,7 +27,7 @@ druid.host=localhost

|

|||

druid.service=realtime

|

||||

druid.port=8083

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-kafka-seven:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-kafka-seven:0.6.101"]

|

||||

|

||||

|

||||

druid.zk.service.host=localhost

|

||||

|

|

@ -76,7 +76,7 @@ druid.host=#{IP_ADDR}:8080

|

|||

druid.port=8080

|

||||

druid.service=druid/prod/realtime

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.81","io.druid.extensions:druid-kafka-seven:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.101","io.druid.extensions:druid-kafka-seven:0.6.101"]

|

||||

|

||||

druid.zk.service.host=#{ZK_IPs}

|

||||

druid.zk.paths.base=/druid/prod

|

||||

|

|

|

|||

|

|

@ -0,0 +1,142 @@

|

|||

---

|

||||

layout: doc_page

|

||||

---

|

||||

# Select Queries

|

||||

Select queries return raw Druid rows and support pagination.

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType": "select",

|

||||

"dataSource": "wikipedia",

|

||||

"dimensions":[],

|

||||

"metrics":[],

|

||||

"granularity": "all",

|

||||

"intervals": [

|

||||

"2013-01-01/2013-01-02"

|

||||

],

|

||||

"pagingSpec":{"pagingIdentifiers": {}, "threshold":5}

|

||||

}

|

||||

```

|

||||

|

||||

There are several main parts to a select query:

|

||||

|

||||

|property|description|required?|

|

||||

|--------|-----------|---------|

|

||||

|queryType|This String should always be "select"; this is the first thing Druid looks at to figure out how to interpret the query|yes|

|

||||

|dataSource|A String defining the data source to query, very similar to a table in a relational database|yes|

|

||||

|intervals|A JSON Object representing ISO-8601 Intervals. This defines the time ranges to run the query over.|yes|

|

||||

|dimensions|The list of dimensions to select. If left empty, all dimensions are returned.|no|

|

||||

|metrics|The list of metrics to select. If left empty, all metrics are returned.|no|

|

||||

|pagingSpec|A JSON object indicating offsets into different scanned segments. Select query results will return a pagingSpec that can be reused for pagination.|yes|

|

||||

|context|An additional JSON Object which can be used to specify certain flags.|no|

|

||||

|

||||

The format of the result is:

|

||||

|

||||

```json

|

||||

[{

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"result" : {

|

||||

"pagingIdentifiers" : {

|

||||

"wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9" : 4

|

||||

},

|

||||

"events" : [ {

|

||||

"segmentId" : "wikipedia_editstream_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",

|

||||

"offset" : 0,

|

||||

"event" : {

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"robot" : "1",

|

||||

"namespace" : "article",

|

||||

"anonymous" : "0",

|

||||

"unpatrolled" : "0",

|

||||

"page" : "11._korpus_(NOVJ)",

|

||||

"language" : "sl",

|

||||

"newpage" : "0",

|

||||

"user" : "EmausBot",

|

||||

"count" : 1.0,

|

||||

"added" : 39.0,

|

||||

"delta" : 39.0,

|

||||

"variation" : 39.0,

|

||||

"deleted" : 0.0

|

||||

}

|

||||

}, {

|

||||

"segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",

|

||||

"offset" : 1,

|

||||

"event" : {

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"robot" : "0",

|

||||

"namespace" : "article",

|

||||

"anonymous" : "0",

|

||||

"unpatrolled" : "0",

|

||||

"page" : "112_U.S._580",

|

||||

"language" : "en",

|

||||

"newpage" : "1",

|

||||

"user" : "MZMcBride",

|

||||

"count" : 1.0,

|

||||

"added" : 70.0,

|

||||

"delta" : 70.0,

|

||||

"variation" : 70.0,

|

||||

"deleted" : 0.0

|

||||

}

|

||||

}, {

|

||||

"segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",

|

||||

"offset" : 2,

|

||||

"event" : {

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"robot" : "0",

|

||||

"namespace" : "article",

|

||||

"anonymous" : "0",

|

||||

"unpatrolled" : "0",

|

||||

"page" : "113_U.S._243",

|

||||

"language" : "en",

|

||||

"newpage" : "1",

|

||||

"user" : "MZMcBride",

|

||||

"count" : 1.0,

|

||||

"added" : 77.0,

|

||||

"delta" : 77.0,

|

||||

"variation" : 77.0,

|

||||

"deleted" : 0.0

|

||||

}

|

||||

}, {

|

||||

"segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",

|

||||

"offset" : 3,

|

||||

"event" : {

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"robot" : "0",

|

||||

"namespace" : "article",

|

||||

"anonymous" : "0",

|

||||

"unpatrolled" : "0",

|

||||

"page" : "113_U.S._73",

|

||||

"language" : "en",

|

||||

"newpage" : "1",

|

||||

"user" : "MZMcBride",

|

||||

"count" : 1.0,

|

||||

"added" : 70.0,

|

||||

"delta" : 70.0,

|

||||

"variation" : 70.0,

|

||||

"deleted" : 0.0

|

||||

}

|

||||

}, {

|

||||

"segmentId" : "wikipedia_2012-12-29T00:00:00.000Z_2013-01-10T08:00:00.000Z_2013-01-10T08:13:47.830Z_v9",

|

||||

"offset" : 4,

|

||||

"event" : {

|

||||

"timestamp" : "2013-01-01T00:00:00.000Z",

|

||||

"robot" : "0",

|

||||

"namespace" : "article",

|

||||

"anonymous" : "0",

|

||||

"unpatrolled" : "0",

|

||||

"page" : "113_U.S._756",

|

||||

"language" : "en",

|

||||

"newpage" : "1",

|

||||

"user" : "MZMcBride",

|

||||

"count" : 1.0,

|

||||

"added" : 68.0,

|

||||

"delta" : 68.0,

|

||||

"variation" : 68.0,

|

||||

"deleted" : 0.0

|

||||

}

|

||||

} ]

|

||||

}

|

||||

} ]

|

||||

```

|

||||

|

||||

The result returns a global pagingSpec that can be reused for the next select query. The offset will need to be increased by 1 on the client side.
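
A client-side pagination step might look like the sketch below, which copies the previous query and advances each returned offset by one, as described above. The function name is hypothetical, and the result shape is assumed to match the sample response shown earlier in this page.

```python
import copy

def next_select_query(query, result):
    """Build the follow-up select query from the previous query and its result."""
    if not result:
        return None  # nothing was returned, so there is no next page
    identifiers = result[0]["result"]["pagingIdentifiers"]
    following = copy.deepcopy(query)
    # Advance each offset by one so the next page starts after the last row seen.
    following["pagingSpec"]["pagingIdentifiers"] = {
        segment_id: offset + 1 for segment_id, offset in identifiers.items()
    }
    return following
```

The `threshold` and all other query fields are carried over unchanged, so repeating this step walks through the rows one page at a time.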

|

||||

|

|

@ -51,12 +51,12 @@ The Index Task is a simpler variation of the Index Hadoop task that is designed

|

|||

|--------|-----------|---------|

|

||||

|type|The task type, this should always be "index".|yes|

|

||||

|id|The task ID. If this is not explicitly specified, Druid generates the task ID using the name of the task file and date-time stamp. |no|

|

||||

|granularitySpec|Specifies the segment chunks that the task will process. `type` is always "uniform"; `gran` sets the granularity of the chunks ("DAY" means all segments containing timestamps in the same day, while `intervals` sets the interval that the chunks will cover.|yes|

|

||||

|granularitySpec|Specifies the segment chunks that the task will process. `type` is always "uniform"; `gran` sets the granularity of the chunks ("DAY" means all segments containing timestamps in the same day), while `intervals` sets the interval that the chunks will cover.|yes|

|

||||

|spatialDimensions|Dimensions to build spatial indexes over. See [Geographic Queries](GeographicQueries.html).|no|

|

||||

|aggregators|The metrics to aggregate in the data set. For more info, see [Aggregations](Aggregations.html)|yes|

|

||||

|aggregators|The metrics to aggregate in the data set. For more info, see [Aggregations](Aggregations.html).|yes|

|

||||

|indexGranularity|The rollup granularity for timestamps. See [Realtime Ingestion](Realtime-ingestion.html) for more information. |no|

|

||||

|targetPartitionSize|Used in sharding. Determines how many rows are in each segment.|no|

|

||||

|firehose|The input source of data. For more info, see [Firehose](Firehose.html)|yes|

|

||||

|firehose|The input source of data. For more info, see [Firehose](Firehose.html).|yes|

|

||||

|rowFlushBoundary|Used in determining when intermediate persist should occur to disk.|no|

|

||||

|

||||

### Index Hadoop Task

|

||||

|

|

@ -74,14 +74,14 @@ The Hadoop Index Task is used to index larger data sets that require the paralle

|

|||

|--------|-----------|---------|

|

||||

|type|The task type, this should always be "index_hadoop".|yes|

|

||||

|config|A Hadoop Index Config. See [Batch Ingestion](Batch-ingestion.html)|yes|

|

||||

|hadoopCoordinates|The Maven \<groupId\>:\<artifactId\>:\<version\> of Hadoop to use. The default is "org.apache.hadoop:hadoop-core:1.0.3".|no|

|

||||

|hadoopCoordinates|The Maven \<groupId\>:\<artifactId\>:\<version\> of Hadoop to use. The default is "org.apache.hadoop:hadoop-client:2.3.0".|no|

|

||||

|

||||

|

||||

The Hadoop Index Config submitted as part of a Hadoop Index Task is identical to the Hadoop Index Config used by the `HadoopBatchIndexer` except that three fields must be omitted: `segmentOutputPath`, `workingPath`, `updaterJobSpec`. The Indexing Service takes care of setting these fields internally.

|

||||

|

||||

#### Using your own Hadoop distribution

|

||||

|

||||

Druid is compiled against Apache hadoop-core 1.0.3. However, if you happen to use a different flavor of hadoop that is API compatible with hadoop-core 1.0.3, you should only have to change the hadoopCoordinates property to point to the maven artifact used by your distribution.

|

||||

Druid is compiled against Apache hadoop-client 2.3.0. However, if you happen to use a different flavor of hadoop that is API compatible with hadoop-client 2.3.0, you should only have to change the hadoopCoordinates property to point to the maven artifact used by your distribution.

|

||||

|

||||

#### Resolving dependency conflicts running HadoopIndexTask

|

||||

|

||||

|

|

|

|||

|

|

@ -49,7 +49,7 @@ There are two ways to setup Druid: download a tarball, or [Build From Source](Bu

|

|||

|

||||

### Download a Tarball

|

||||

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.81-bin.tar.gz). Download this file to a directory of your choosing.

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.101-bin.tar.gz). Download this file to a directory of your choosing.

|

||||

|

||||

You can extract the awesomeness within by issuing:

|

||||

|

||||

|

|

@ -60,7 +60,7 @@ tar -zxvf druid-services-*-bin.tar.gz

|

|||

Not too lost so far right? That's great! If you cd into the directory:

|

||||

|

||||

```

|

||||

cd druid-services-0.6.81

|

||||

cd druid-services-0.6.101

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

|

|

|

|||

|

|

@ -42,7 +42,7 @@ Metrics (things to aggregate over):

|

|||

Setting Up

|

||||

----------

|

||||

|

||||

At this point, you should already have Druid downloaded and are comfortable with running a Druid cluster locally. If you are not, see [here](Tutiroal%3A-The-Druid-Cluster.html).

|

||||

At this point, you should already have Druid downloaded and are comfortable with running a Druid cluster locally. If you are not, see [here](Tutorial%3A-The-Druid-Cluster.html).

|

||||

|

||||

Let's start from our usual starting point in the tarball directory.

|

||||

|

||||

|

|

@ -136,7 +136,7 @@ Indexing the Data

|

|||

To index the data and build a Druid segment, we are going to need to submit a task to the indexing service. This task should already exist:

|

||||

|

||||

```

|

||||

examples/indexing/index_task.json

|

||||

examples/indexing/wikipedia_index_task.json

|

||||

```

|

||||

|

||||

Open up the file to see the following:

|

||||

|

|

|

|||

|

|

@ -13,7 +13,7 @@ In this tutorial, we will set up other types of Druid nodes and external depende

|

|||

|

||||

If you followed the first tutorial, you should already have Druid downloaded. If not, let's go back and do that first.

|

||||

|

||||

You can download the latest version of druid [here](http://static.druid.io/artifacts/releases/druid-services-0.6.81-bin.tar.gz)

|

||||

You can download the latest version of druid [here](http://static.druid.io/artifacts/releases/druid-services-0.6.101-bin.tar.gz)

|

||||

|

||||

and untar the contents within by issuing:

|

||||

|

||||

|

|

@ -149,7 +149,7 @@ druid.port=8081

|

|||

|

||||

druid.zk.service.host=localhost

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.101"]

|

||||

|

||||

# Dummy read only AWS account (used to download example data)

|

||||

druid.s3.secretKey=QyyfVZ7llSiRg6Qcrql1eEUG7buFpAK6T6engr1b

|

||||

|

|

@ -240,7 +240,7 @@ druid.port=8083

|

|||

|

||||

druid.zk.service.host=localhost

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-examples:0.6.81","io.druid.extensions:druid-kafka-seven:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-examples:0.6.101","io.druid.extensions:druid-kafka-seven:0.6.101"]

|

||||

|

||||

# Change this config to db to hand off to the rest of the Druid cluster

|

||||

druid.publish.type=noop

|

||||

|

|

|

|||

|

|

@ -37,7 +37,7 @@ There are two ways to setup Druid: download a tarball, or [Build From Source](Bu

|

|||

|

||||

h3. Download a Tarball

|

||||

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.81-bin.tar.gz)

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.101-bin.tar.gz)

|

||||

Download this file to a directory of your choosing.

|

||||

You can extract the awesomeness within by issuing:

|

||||

|

||||

|

|

@ -48,7 +48,7 @@ tar zxvf druid-services-*-bin.tar.gz

|

|||

Not too lost so far right? That's great! If you cd into the directory:

|

||||

|

||||

```

|

||||

cd druid-services-0.6.81

|

||||

cd druid-services-0.6.101

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

|

|

|

|||

|

|

@ -1,77 +1,93 @@

|

|||

---

|

||||

layout: doc_page

|

||||

---

|

||||

Greetings! We see you've taken an interest in Druid. That's awesome! Hopefully this tutorial will help clarify some core Druid concepts. We will go through one of the Real-time "Examples":Examples.html, and issue some basic Druid queries. The data source we'll be working with is the "Twitter spritzer stream":https://dev.twitter.com/docs/streaming-apis/streams/public. If you are ready to explore Druid, brave its challenges, and maybe learn a thing or two, read on!

|

||||

Greetings! We see you've taken an interest in Druid. That's awesome! Hopefully this tutorial will help clarify some core Druid concepts. We will go through one of the Real-time [Examples](Examples.html), and issue some basic Druid queries. The data source we'll be working with is the [Twitter spritzer stream](https://dev.twitter.com/docs/streaming-apis/streams/public). If you are ready to explore Druid, brave its challenges, and maybe learn a thing or two, read on!

|

||||

|

||||

h2. Setting Up

|

||||

# Setting Up

|

||||

|

||||

There are two ways to setup Druid: download a tarball, or build it from source.

|

||||

|

||||

h3. Download a Tarball

|

||||

# Download a Tarball

|

||||

|

||||

We've built a tarball that contains everything you'll need. You'll find it "here":http://static.druid.io/artifacts/releases/druid-services-0.6.81-bin.tar.gz.

|

||||

We've built a tarball that contains everything you'll need. You'll find it [here](http://static.druid.io/artifacts/releases/druid-services-0.6.101-bin.tar.gz).

|

||||

Download this bad boy to a directory of your choosing.

|

||||

|

||||

You can extract the awesomeness within by issuing:

|

||||

|

||||

pre. tar -zxvf druid-services-0.X.X.tar.gz

|

||||

```

|

||||

tar -zxvf druid-services-0.X.X.tar.gz

|

||||

```

|

||||

|

||||

Not too lost so far right? That's great! If you cd into the directory:

|

||||

|

||||

pre. cd druid-services-0.X.X

|

||||

```

|

||||

cd druid-services-0.X.X

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

|

||||

* run_example_server.sh

|

||||

* run_example_client.sh

|

||||

* LICENSE, config, examples, lib directories

|

||||

|

||||

h3. Clone and Build from Source

|

||||

# Clone and Build from Source

|

||||

|

||||

The other way to setup Druid is from source via git. To do so, run these commands:

|

||||

|

||||

<pre><code>git clone git@github.com:metamx/druid.git

|

||||

```

|

||||

git clone git@github.com:metamx/druid.git

|

||||

cd druid

|

||||

git checkout druid-0.X.X

|

||||

./build.sh

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You should see a bunch of files:

|

||||

|

||||

<pre><code>DruidCorporateCLA.pdf README common examples indexer pom.xml server

|

||||

```

|

||||

DruidCorporateCLA.pdf README common examples indexer pom.xml server

|

||||

DruidIndividualCLA.pdf build.sh doc group_by.body install publications services

|

||||

LICENSE client eclipse_formatting.xml index-common merger realtime

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You can find the example executables in the examples/bin directory:

|

||||

|

||||

* run_example_server.sh

|

||||

* run_example_client.sh

|

||||

|

||||

h2. Running Example Scripts

|

||||

# Running Example Scripts

|

||||

|

||||

Let's start doing stuff. You can start a Druid "Realtime":Realtime.html node by issuing:

|

||||

<code>./run_example_server.sh</code>

|

||||

Let's start doing stuff. You can start a Druid [Realtime](Realtime.html) node by issuing:

|

||||

|

||||

```

|

||||

./run_example_server.sh

|

||||

```

|

||||

|

||||

Select "twitter".

|

||||

|

||||

You'll need to register a new application with the twitter API, which only takes a minute. Go to "https://twitter.com/oauth_clients/new":https://twitter.com/oauth_clients/new and fill out the form and submit. Don't worry, the home page and callback url can be anything. This will generate keys for the Twitter example application. Take note of the values for consumer key/secret and access token/secret.

|

||||

You'll need to register a new application with the twitter API, which only takes a minute. Go to [this link](https://twitter.com/oauth_clients/new) and fill out the form and submit. Don't worry, the home page and callback url can be anything. This will generate keys for the Twitter example application. Take note of the values for consumer key/secret and access token/secret.

|

||||

|

||||

Enter your credentials when prompted.

|

||||

|

||||

Once the node starts up you will see a bunch of logs about setting up properties and connecting to the data source. If everything was successful, you should see messages of the form shown below. If you see crazy exceptions, you probably typed in your login information incorrectly.

|

||||

<pre><code>2013-05-17 23:04:40,934 INFO [main] org.mortbay.log - Started SelectChannelConnector@0.0.0.0:8080

|

||||

|

||||

```

|

||||

2013-05-17 23:04:40,934 INFO [main] org.mortbay.log - Started SelectChannelConnector@0.0.0.0:8080

|

||||

2013-05-17 23:04:40,935 INFO [main] com.metamx.common.lifecycle.Lifecycle$AnnotationBasedHandler - Invoking start method[public void com.metamx.druid.http.FileRequestLogger.start()] on object[com.metamx.druid.http.FileRequestLogger@42bb0406].

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] twitter4j.TwitterStreamImpl - Connection established.

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - Connected_to_Twitter

|

||||

2013-05-17 23:04:41,578 INFO [Twitter Stream consumer-1[Establishing connection]] twitter4j.TwitterStreamImpl - Receiving status stream.

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

Periodically, you'll also see messages of the form:

|

||||

<pre><code>2013-05-17 23:04:59,793 INFO [chief-twitterstream] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - nextRow() has returned 1,000 InputRows

|

||||

</code></pre>

|

||||

|

||||

```

|

||||

2013-05-17 23:04:59,793 INFO [chief-twitterstream] io.druid.examples.twitter.TwitterSpritzerFirehoseFactory - nextRow() has returned 1,000 InputRows

|

||||

```

|

||||

|

||||

These messages indicate you are ingesting events. The Druid real-time node ingests events into an in-memory buffer. Periodically, these events will be persisted to disk. Persisting to disk generates a whole bunch of logs:

|

||||

|

||||

<pre><code>2013-05-17 23:06:40,918 INFO [chief-twitterstream] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - Submitting persist runnable for dataSource[twitterstream]

|

||||

```

|

||||

2013-05-17 23:06:40,918 INFO [chief-twitterstream] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - Submitting persist runnable for dataSource[twitterstream]

|

||||

2013-05-17 23:06:40,920 INFO [twitterstream-incremental-persist] com.metamx.druid.realtime.plumber.RealtimePlumberSchool - DataSource[twitterstream], Interval[2013-05-17T23:00:00.000Z/2013-05-18T00:00:00.000Z], persisting Hydrant[FireHydrant{index=com.metamx.druid.index.v1.IncrementalIndex@126212dd, queryable=com.metamx.druid.index.IncrementalIndexSegment@64c47498, count=0}]

|

||||

2013-05-17 23:06:40,937 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexMerger - Starting persist for interval[2013-05-17T23:00:00.000Z/2013-05-17T23:07:00.000Z], rows[4,666]

|

||||

2013-05-17 23:06:41,039 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexMerger - outDir[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0/v8-tmp] completed index.drd in 11 millis.

|

||||

|

|

@ -88,16 +104,20 @@ These messages indicate you are ingesting events. The Druid real time-node inges

|

|||

2013-05-17 23:06:41,425 INFO [twitterstream-incremental-persist] com.metamx.druid.index.v1.IndexIO$DefaultIndexIOHandler - Converting v8[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0/v8-tmp] to v9[/tmp/example/twitter_realtime/basePersist/twitterstream/2013-05-17T23:00:00.000Z_2013-05-18T00:00:00.000Z/0]

|

||||

2013-05-17 23:06:41,426 INFO [twitterstream-incremental-persist]

|

||||

... ETC

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

The logs are about building different columns, probably not the most exciting stuff (they might as well be in Vulcan) if you are learning about Druid for the first time. Nevertheless, if you are interested in the details of our real-time architecture and why we persist indexes to disk, I suggest you read our "White Paper":http://static.druid.io/docs/druid.pdf.

|

||||

|

||||

Okay, things are about to get real (-time). To query the real-time node you've spun up, you can issue:

|

||||

<pre>./run_example_client.sh</pre>

|

||||

|

||||

Select "twitter" once again. This script issues ["GroupByQuery":GroupByQuery.html]s to the twitter data we've been ingesting. The query looks like this:

|

||||

```

|

||||

./run_example_client.sh

|

||||

```

|

||||

|

||||

<pre><code>{

|

||||

Select "twitter" once again. This script issues [GroupByQueries](GroupByQuery.html) to the twitter data we've been ingesting. The query looks like this:

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType": "groupBy",

|

||||

"dataSource": "twitterstream",

|

||||

"granularity": "all",

|

||||

|

|

@ -109,13 +129,14 @@ Select "twitter" once again. This script issues ["GroupByQuery":GroupByQuery.htm

|

|||

"filter": { "type": "selector", "dimension": "lang", "value": "en" },

|

||||

"intervals":["2012-10-01T00:00/2020-01-01T00"]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

This is a **groupBy** query, which you may be familiar with from SQL. We are grouping, or aggregating, via the **dimensions** field: ["lang", "utc_offset"]. We are **filtering** via the **"lang"** dimension, to only look at English tweets. Our **aggregations** are what we are calculating: a row count, and the sum of the tweets in our data.

|

||||

|

||||

The result looks something like this:

|

||||

|

||||

<pre><code>[

|

||||

```json

|

||||

[

|

||||

{

|

||||

"version": "v1",

|

||||

"timestamp": "2012-10-01T00:00:00.000Z",

|

||||

|

|

@ -137,41 +158,48 @@ The result looks something like this:

|

|||

}

|

||||

},

|

||||

...

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

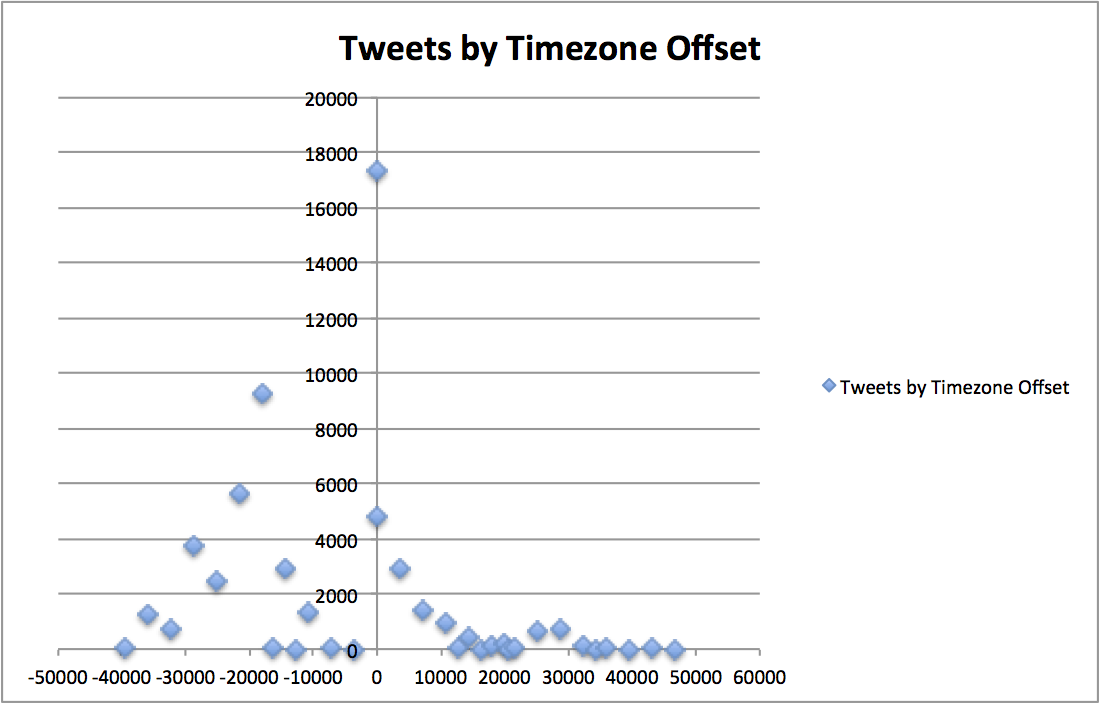

This data, plotted in a time series/distribution, looks something like this:

|

||||

|

||||

!http://metamarkets.com/wp-content/uploads/2013/06/tweets_timezone_offset.png(Timezone / Tweets Scatter Plot)!

|

||||

|

||||

|

||||

This groupBy query is a bit complicated and we'll return to it later. For the time being, just make sure you are getting some blocks of data back. If you are having problems, make sure you have "curl":http://curl.haxx.se/ installed. Control+C to break out of the client script.

|

||||

This groupBy query is a bit complicated and we'll return to it later. For the time being, just make sure you are getting some blocks of data back. If you are having problems, make sure you have [curl](http://curl.haxx.se/) installed. Control+C to break out of the client script.

|

||||

|

||||

h2. Querying Druid

|

||||

# Querying Druid

|

||||

|

||||

In your favorite editor, create the file:

|

||||

<pre>time_boundary_query.body</pre>

|

||||

|

||||

```

|

||||

time_boundary_query.body

|

||||

```

|

||||

|

||||

Druid queries are JSON blobs which are relatively painless to create programmatically, but an absolute pain to write by hand. So anyway, we are going to create a Druid query by hand. Add the following to the file you just created:

|

||||

<pre><code>{

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType" : "timeBoundary",

|

||||

"dataSource" : "twitterstream"

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

The "TimeBoundaryQuery":TimeBoundaryQuery.html is one of the simplest Druid queries. To run the query, you can issue:

|

||||

<pre><code>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @time_boundary_query.body

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

We get something like this JSON back:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"minTime" : "2013-06-10T19:09:00.000Z",

|

||||

"maxTime" : "2013-06-10T20:50:00.000Z"

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

That's the result. What information do you think the result is conveying?

|

||||

...
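As a hedged illustration of reading a response of this shape, the Python sketch below parses the sample JSON shown above and computes the gap between `minTime` and `maxTime`. The helper names `parse_timestamp` and `time_span` are hypothetical and not part of Druid; the sample string is copied from the response above.

```python
import json
from datetime import datetime

# Sample timeBoundary response, copied from the tutorial output above.
SAMPLE_RESPONSE = """[ {
  "timestamp" : "2013-06-10T19:09:00.000Z",
  "result" : {
    "minTime" : "2013-06-10T19:09:00.000Z",
    "maxTime" : "2013-06-10T20:50:00.000Z"
  }
} ]"""


def parse_timestamp(value):
    """Parse an ISO-8601 UTC timestamp with milliseconds (hypothetical helper)."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%S.%fZ")


def time_span(response_text):
    """Return maxTime minus minTime from the first entry of the response."""
    result = json.loads(response_text)[0]["result"]
    return parse_timestamp(result["maxTime"]) - parse_timestamp(result["minTime"])


if __name__ == "__main__":
    print(time_span(SAMPLE_RESPONSE))  # prints 1:41:00
```

For the sample above the two timestamps are one hour and 41 minutes apart.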

|

||||

|

|

@ -179,11 +207,14 @@ If you said the result is indicating the maximum and minimum timestamps we've se

|

|||

|

||||

Return to your favorite editor and create the file:

|

||||

|

||||

<pre>timeseries_query.body</pre>

|

||||

```

|

||||

timeseries_query.body

|

||||

```

|

||||

|

||||

We are going to make a slightly more complicated query, the "TimeseriesQuery":TimeseriesQuery.html. Copy and paste the following into the file:

|

||||

We are going to make a slightly more complicated query, the [TimeseriesQuery](TimeseriesQuery.html). Copy and paste the following into the file:

|

||||

|

||||

<pre><code>{

|

||||

```json

|

||||

{

|

||||

"queryType":"timeseries",

|

||||

"dataSource":"twitterstream",

|

||||

"intervals":["2010-01-01/2020-01-01"],

|

||||

|

|

@ -193,22 +224,26 @@ We are going to make a slightly more complicated query, the "TimeseriesQuery":Ti

|

|||

{ "type": "doubleSum", "fieldName": "tweets", "name": "tweets"}

|

||||

]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

You are probably wondering, what are these "Granularities":Granularities.html and "Aggregations":Aggregations.html things? What the query is doing is aggregating some metrics over some span of time.

|

||||

You are probably wondering, what are these [Granularities](Granularities.html) and [Aggregations](Aggregations.html) things? What the query is doing is aggregating some metrics over some span of time.

|

||||

To issue the query and get some results, run the following in your command line:

|

||||

<pre><code>curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @timeseries_query.body</code></pre>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @timeseries_query.body

|

||||

```

|

||||

|

||||

Once again, you should get a JSON blob of text back with your results, that looks something like this:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"tweets" : 358562.0,

|

||||

"rows" : 272271

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

If you issue the query again, you should notice your results updating.

|

||||

|

||||

|

|

@ -216,7 +251,8 @@ Right now all the results you are getting back are being aggregated into a singl

|

|||

|

||||

If you loudly exclaimed "we can change granularity to minute", you are absolutely correct again! We can specify different granularities to bucket our results, like so:

|

||||

|

||||

<pre><code>{

|

||||

```json

|

||||

{

|

||||

"queryType":"timeseries",

|

||||

"dataSource":"twitterstream",

|

||||

"intervals":["2010-01-01/2020-01-01"],

|

||||

|

|

@ -226,11 +262,12 @@ If you loudly exclaimed "we can change granularity to minute", you are absolutel

|

|||

{ "type": "doubleSum", "fieldName": "tweets", "name": "tweets"}

|

||||

]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

This gives us something like the following:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"timestamp" : "2013-06-10T19:09:00.000Z",

|

||||

"result" : {

|

||||

"tweets" : 2650.0,

|

||||

|

|

@ -250,16 +287,21 @@ This gives us something like the following:

|

|||

}

|

||||

},

|

||||

...

|

||||

</code></pre>

|

||||

```
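The two timeseries queries above differ only in how results are bucketed. A minimal Python sketch of building the body and posting it, as an alternative to curl, might look like the following. The function names are hypothetical, and because the diff elides part of the query body, the `granularity` values and the `count` aggregator named `rows` are assumptions inferred from the surrounding text and the sample results.

```python
import json
import urllib.request


def timeseries_query(granularity="all"):
    """Build a timeseries query body (sketch; elided fields are assumed)."""
    return {
        "queryType": "timeseries",
        "dataSource": "twitterstream",
        "intervals": ["2010-01-01/2020-01-01"],
        # Assumption: "all" gives one bucket, "minute" gives per-minute buckets.
        "granularity": granularity,
        "aggregations": [
            # Assumption: a row count named "rows", matching the sample output.
            {"type": "count", "name": "rows"},
            {"type": "doubleSum", "fieldName": "tweets", "name": "tweets"},
        ],
    }


def post_query(query, url="http://localhost:8080/druid/v2/?pretty"):
    """POST the query as JSON to the node started by run_example_server.sh."""
    request = urllib.request.Request(
        url,
        data=json.dumps(query).encode("utf-8"),
        headers={"content-type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

With the example realtime node running, `post_query(timeseries_query("minute"))` would be expected to return a list of per-minute buckets similar to the output above.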

|

||||

|

||||

h2. Solving a Problem

|

||||

# Solving a Problem

|

||||

|

||||

One of Druid's main powers (see what we did there?) is to provide answers to problems, so let's pose a problem. What if we wanted to know what the top hash tags are, ordered by the number of tweets, where the language is English, over the last few minutes you've been reading this tutorial? To solve this problem, we have to return to the query we introduced at the very beginning of this tutorial, the "GroupByQuery":GroupByQuery.html. It would be nice if we could group results by dimension value and somehow sort those results... and it turns out we can!

|

||||

|

||||

Let's create the file:

|

||||

<pre>group_by_query.body</pre>

|

||||

|

||||

```

|

||||

group_by_query.body

|

||||

```

|

||||

and put the following in there:

|

||||

<pre><code>{

|

||||

|

||||

```json

|

||||

{

|

||||

"queryType": "groupBy",

|

||||

"dataSource": "twitterstream",

|

||||

"granularity": "all",

|

||||

|

|

@ -271,16 +313,20 @@ and put the following in there:

|

|||

"filter": {"type": "selector", "dimension": "lang", "value": "en" },

|

||||

"intervals":["2012-10-01T00:00/2020-01-01T00"]

|

||||

}

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

Whoa! Our query just got way more complicated. Now we have these "Filters":Filters.html things and this "OrderBy":OrderBy.html thing. Fear not, it turns out the new objects we've introduced to our query can help define the format of our results and provide an answer to our question.

|

||||

|

||||

If you issue the query:

|

||||

<pre><code>curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @group_by_query.body</code></pre>

|

||||

|

||||

```

|

||||

curl -X POST 'http://localhost:8080/druid/v2/?pretty' -H 'content-type: application/json' -d @group_by_query.body

|

||||

```

|

||||

|

||||

You should hopefully see an answer to our question. For my twitter stream, it looks like this:

|

||||

|

||||

<pre><code>[ {

|

||||

```json

|

||||

[ {

|

||||

"version" : "v1",

|

||||

"timestamp" : "2012-10-01T00:00:00.000Z",

|

||||

"event" : {

|

||||

|

|

@ -316,12 +362,12 @@ You should hopefully see an answer to our question. For my twitter stream, it lo

|

|||

"htags" : "IDidntTextYouBackBecause"

|

||||

}

|

||||

} ]

|

||||

</code></pre>

|

||||

```

|

||||

|

||||

Feel free to tweak other query parameters to answer other questions you may have about the data.

|

||||

|

||||

h2. Additional Information

|

||||

# Additional Information

|

||||

|

||||

This tutorial is merely showcasing a small fraction of what Druid can do. Next, continue on to "The Druid Cluster":./Tutorial:-The-Druid-Cluster.html.

|

||||

This tutorial is merely showcasing a small fraction of what Druid can do. Next, continue on to [The Druid Cluster](./Tutorial:-The-Druid-Cluster.html).

|

||||

|

||||

And thus concludes our journey! Hopefully you learned a thing or two about Druid real-time ingestion, querying Druid, and how Druid can be used to solve problems. If you have additional questions, feel free to post in our "google groups page":http://www.groups.google.com/forum/#!forum/druid-development.

|

||||

And thus concludes our journey! Hopefully you learned a thing or two about Druid real-time ingestion, querying Druid, and how Druid can be used to solve problems. If you have additional questions, feel free to post in our [google groups page](http://www.groups.google.com/forum/#!forum/druid-development).

|

||||

|

|

@ -22,12 +22,6 @@ h2. Configuration

|

|||

* "Broker":Broker-Config.html

|

||||

* "Indexing Service":Indexing-Service-Config.html

|

||||

|

||||

h2. Operations

|

||||

* "Extending Druid":./Modules.html

|

||||

* "Cluster Setup":./Cluster-setup.html

|

||||

* "Booting a Production Cluster":./Booting-a-production-cluster.html

|

||||

* "Performance FAQ":./Performance-FAQ.html

|

||||

|

||||

h2. Data Ingestion

|

||||

* "Realtime":./Realtime-ingestion.html

|

||||

* "Batch":./Batch-ingestion.html

|

||||

|

|

@ -36,6 +30,12 @@ h2. Data Ingestion

|

|||

* "Data Formats":./Data_formats.html

|

||||

* "Ingestion FAQ":./Ingestion-FAQ.html

|

||||

|

||||

h2. Operations

|

||||

* "Extending Druid":./Modules.html

|

||||

* "Cluster Setup":./Cluster-setup.html

|

||||

* "Booting a Production Cluster":./Booting-a-production-cluster.html

|

||||

* "Performance FAQ":./Performance-FAQ.html

|

||||

|

||||

h2. Querying

|

||||

* "Querying":./Querying.html

|

||||

** "Filters":./Filters.html

|

||||

|

|

@ -75,6 +75,7 @@ h2. Architecture

|

|||

h2. Experimental

|

||||

* "About Experimental Features":./About-Experimental-Features.html

|

||||

* "Geographic Queries":./GeographicQueries.html

|

||||

* "Select Query":./SelectQuery.html

|

||||

|

||||

h2. Development

|

||||

* "Versioning":./Versioning.html

|

||||

|

|

|

|||

|

|

@ -4,7 +4,7 @@ druid.port=8081

|

|||

|

||||

druid.zk.service.host=localhost

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-s3-extensions:0.6.101"]

|

||||

|

||||

# Dummy read only AWS account (used to download example data)

|

||||

druid.s3.secretKey=QyyfVZ7llSiRg6Qcrql1eEUG7buFpAK6T6engr1b

|

||||

|

|

|

|||

|

|

@ -4,7 +4,7 @@ druid.port=8083

|

|||

|

||||

druid.zk.service.host=localhost

|

||||

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-examples:0.6.81","io.druid.extensions:druid-kafka-seven:0.6.81","io.druid.extensions:druid-rabbitmq:0.6.81"]

|

||||

druid.extensions.coordinates=["io.druid.extensions:druid-examples:0.6.101","io.druid.extensions:druid-kafka-seven:0.6.101","io.druid.extensions:druid-rabbitmq:0.6.101"]

|

||||

|

||||

# Change this config to db to hand off to the rest of the Druid cluster

|

||||

druid.publish.type=noop

|

||||

|

|

|

|||

|

|

@ -28,7 +28,7 @@

|

|||

<parent>

|

||||

<groupId>io.druid</groupId>

|

||||

<artifactId>druid</artifactId>

|

||||

<version>0.6.83-SNAPSHOT</version>

|

||||

<version>0.6.102-SNAPSHOT</version>

|

||||

</parent>

|

||||

|

||||

<dependencies>

|

||||

|

|

@ -58,6 +58,11 @@

|

|||

<artifactId>twitter4j-stream</artifactId>

|

||||

<version>3.0.3</version>

|

||||

</dependency>

|

||||

<dependency>

|

||||

<groupId>commons-validator</groupId>

|

||||

<artifactId>commons-validator</artifactId>

|

||||

<version>1.4.0</version>

|

||||

</dependency>

|

||||

|

||||

<!-- For tests! -->

|

||||

<dependency>

|

||||

|

|

@ -82,14 +87,14 @@

|

|||

${project.build.directory}/${project.artifactId}-${project.version}-selfcontained.jar

|

||||

</outputFile>

|

||||

<filters>

|

||||

<filter>

|

||||

<artifact>*:*</artifact>

|

||||

<excludes>

|

||||

<exclude>META-INF/*.SF</exclude>

|

||||

<exclude>META-INF/*.DSA</exclude>

|

||||

<exclude>META-INF/*.RSA</exclude>

|

||||

</excludes>

|

||||

</filter>

|

||||

<filter>

|

||||

<artifact>*:*</artifact>

|

||||

<excludes>

|

||||

<exclude>META-INF/*.SF</exclude>

|

||||

<exclude>META-INF/*.DSA</exclude>

|

||||

<exclude>META-INF/*.RSA</exclude>

|

||||

</excludes>

|

||||

</filter>

|

||||

</filters>

|

||||

</configuration>

|

||||

</execution>

|

||||

|

|

|

|||

|

|

@ -19,8 +19,11 @@

|

|||

|

||||

package io.druid.examples.web;

|

||||

|

||||

import com.google.api.client.repackaged.com.google.common.base.Throwables;

|

||||

import com.google.common.base.Preconditions;

|

||||